Head-driven phrase structure grammar

Head-driven phrase structure grammar (HPSG) is a highly lexicalized, constraint-based grammar[1] developed by Carl Pollard and Ivan Sag.[2][3] It is a type of phrase structure grammar, as opposed to a dependency grammar, and it is the immediate successor to generalized phrase structure grammar. HPSG draws from other fields such as computer science (data type theory and knowledge representation) and uses Ferdinand de Saussure's notion of the sign. It uses a uniform formalism and is organized in a modular way, which makes it attractive for natural language processing.

An HPSG grammar includes principles and grammar rules, as well as lexicon entries, which are normally not considered to belong to a grammar. The formalism is based on lexicalism: the lexicon is more than just a list of entries, but is itself richly structured. Individual entries are marked with types, and these types form a hierarchy. Early versions of the grammar were very lexicalized, with few grammatical rules (schemata). More recent research has tended to add more and richer rules, making HPSG more like construction grammar.[4]
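A type hierarchy with multiple inheritance lets a lexical entry inherit independent properties from several types at once. The Python sketch below illustrates the idea under stated assumptions: the type names are hypothetical and not drawn from any existing grammar, and `meet` simply searches the whole hierarchy for the most general type lying below both of its arguments, returning `None` when there is no such type (or no unique one). Finding that type is what combining two typed values requires.

```python
# Hypothetical type hierarchy: each type maps to its immediate supertypes.
# Multiple inheritance lets a lexical type combine independent properties.
HIERARCHY = {
    "*top*": [],
    "sign": ["*top*"],
    "word": ["sign"],
    "phrase": ["sign"],
    "verb-lex": ["word"],
    "intrans-verb-lex": ["verb-lex"],
    "trans-verb-lex": ["verb-lex"],
    "3sg-lex": ["word"],
    "3sg-intrans-verb-lex": ["intrans-verb-lex", "3sg-lex"],
}


def ancestors(t):
    """Return t together with every type above it in the hierarchy."""
    seen, stack = {t}, [t]
    while stack:
        for parent in HIERARCHY[stack.pop()]:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def is_subtype(a, b):
    """True if a is b or inherits (directly or indirectly) from b."""
    return b in ancestors(a)


def meet(a, b):
    """Most general type that is a subtype of both a and b, or None if there is no unique one."""
    common = [t for t in HIERARCHY if is_subtype(t, a) and is_subtype(t, b)]
    # Discard candidates that are strictly more specific than another candidate.
    most_general = [t for t in common
                    if not any(u != t and is_subtype(t, u) for u in common)]
    return most_general[0] if len(most_general) == 1 else None


print(meet("intrans-verb-lex", "3sg-lex"))  # 3sg-intrans-verb-lex
print(meet("word", "phrase"))               # None
```

Grammar development systems commonly require the hierarchy to supply a unique such type for every compatible pair; this sketch only reports failure when it does not.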

The basic type HPSG deals with is the sign. Words and phrases are two different subtypes of sign. A word has two features: [PHON] (the sound, i.e., the phonological form) and [SYNSEM] (the syntactic and semantic information), both of which are split into subfeatures. Signs and rules are formalized as typed feature structures.
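To make the split into PHON and SYNSEM concrete, a sign can be approximated as nested Python dictionaries, with a feature path such as SYNSEM|CAT|HEAD picking out a value. This is a sketch only: the `TYPE` key, the `RELN` feature, and the `get_path` helper are assumptions for illustration, and real typed feature structures additionally constrain which features each type may carry.

```python
# Hypothetical, heavily simplified sign for "walks" as nested dicts.
# The "TYPE" key and the exact feature geometry are assumptions of this sketch.
walks = {
    "TYPE": "word",
    "PHON": ["walks"],
    "SYNSEM": {
        "CAT": {
            "HEAD": {"TYPE": "verb"},
            "VALENCE": {"SUBJ": [{"CAT": {"HEAD": {"TYPE": "noun"}}}], "COMPS": []},
        },
        "CONTENT": {"RELN": "walk"},
    },
}


def get_path(fs, path):
    """Follow a feature path written in HPSG notation, e.g. 'SYNSEM|CAT|HEAD'."""
    for feature in path.split("|"):
        fs = fs[feature]
    return fs


print(get_path(walks, "SYNSEM|CAT|HEAD"))            # {'TYPE': 'verb'}
print(get_path(walks, "SYNSEM|CAT|VALENCE|COMPS"))   # []
```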


Sample grammar

HPSG generates strings by combining signs, which are defined by their location within a type hierarchy and by their internal feature structure, represented by attribute value matrices (AVMs).[3][5] Features take types or lists of types as their values, and these values may in turn have their own feature structure. Grammatical rules are largely expressed through the constraints signs place on one another. A sign's feature structure describes its phonological, syntactic, and semantic properties. In common notation, AVMs are written with features in upper case and types in italicized lower case. Numbered indices (tags) in an AVM mark token-identical values: the tagged positions share one and the same value rather than holding two equal copies.
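Typed values and token identity can be illustrated together with a small graph-unification sketch. It assumes a tiny single-inheritance type table (`PARENT`) and hypothetical feature names such as `WALKER`; real HPSG systems use richer hierarchies and appropriateness conditions, and they often avoid or undo this destructive style of unification. A numbered tag is modeled by letting two arcs point at the same `Node` object, so specializing the value through one path is visible through the other.

```python
# Hypothetical single-inheritance type table: child -> parent ("*top*" is the root).
PARENT = {"head": "*top*", "noun": "head", "verb": "head",
          "index": "*top*", "3sg": "index"}


def subsumes(general, specific):
    """True if `specific` is `general` or one of its descendants."""
    while specific is not None:
        if specific == general:
            return True
        specific = PARENT.get(specific)
    return False


def unify_types(a, b):
    """Return the more specific of two compatible types, or None if they clash."""
    if subsumes(a, b):
        return b
    if subsumes(b, a):
        return a
    return None


class Node:
    """A feature-structure node: a type plus arcs from feature names to nodes."""

    def __init__(self, type_, **features):
        self.type = type_
        self.features = dict(features)
        self.forward = None  # set when this node has been merged into another


def deref(node):
    """Follow forwarding pointers to the node that currently stands for `node`."""
    while node.forward is not None:
        node = node.forward
    return node


def unify(a, b):
    """Destructively unify two nodes; return the merged node, or None on a clash.

    Simplification: a failed unification leaves its inputs partly modified.
    """
    a, b = deref(a), deref(b)
    if a is b:
        return a  # already token-identical
    merged_type = unify_types(a.type, b.type)
    if merged_type is None:
        return None
    b.forward = a  # everything that pointed at b now reaches a
    a.type = merged_type
    for feature, b_value in b.features.items():
        if feature in a.features:
            if unify(a.features[feature], b_value) is None:
                return None
        else:
            a.features[feature] = b_value
    return a


def get(node, *path):
    """Follow a feature path from `node`, dereferencing at each step."""
    node = deref(node)
    for feature in path:
        node = deref(node.features[feature])
    return node


# Tag [1]: the subject's INDEX and the walker role are one shared node.
tag1 = Node("index")
verb = Node("*top*",
            SUBJ=Node("*top*", INDEX=tag1),
            CONTENT=Node("*top*", WALKER=tag1))
she = Node("*top*", INDEX=Node("3sg"))

unify(verb.features["SUBJ"], she)
print(get(verb, "CONTENT", "WALKER").type)  # 3sg: the shared value was specialized once
print(unify(Node("noun"), Node("verb")))    # None: incompatible types
```

The forwarding-pointer design means merging two nodes never copies data: once `she` is unified with the subject slot, every path that leads to tag [1] reports the specialized `3sg` type.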

In the simplified AVM for the word "walks" below, the verb's categorical information (CAT) is divided into features that describe it (HEAD) and features that describe its arguments (VALENCE).

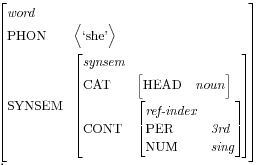

"Walks" is a sign of type word with a head of type verb. As an intransitive verb, "walks" has no complement but requires a subject that is a third person singular noun. The semantic value of the subject (CONTENT) is co-indexed with the verb's only argument (the individual doing the walking). The following AVM for "she" represents a sign with a SYNSEM value that could fulfill those requirements.

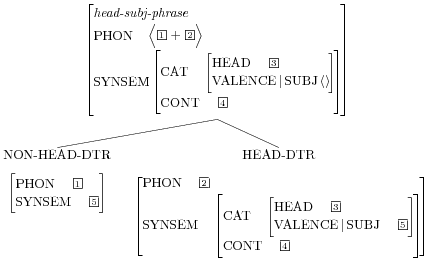
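What it means for "she" to fulfill the requirements of "walks" can be approximated with nested Python dictionaries: every feature the verb specifies for its subject must be consistent with the corresponding value in the candidate's SYNSEM, while extra information (such as gender) does no harm. This sketch simplifies heavily. Its assumptions are that atomic values must match exactly, that features such as `RELN` and `GEND` and the helper `compatible` are illustrative, and that the co-indexation of the subject with the walker is not modeled.

```python
def compatible(a, b):
    """True if two feature structures could unify.

    Simplified: structures are nested dicts, atomic values must be equal,
    and there is no type hierarchy and no structure sharing.
    """
    if isinstance(a, dict) and isinstance(b, dict):
        return all(compatible(a[f], b[f]) for f in a.keys() & b.keys())
    return a == b


# "walks": one subject, a third-person singular noun; no complements.
walks = {
    "PHON": ["walks"],
    "SYNSEM": {
        "CAT": {
            "HEAD": {"TYPE": "verb"},
            "VALENCE": {
                "SUBJ": [{"CAT": {"HEAD": {"TYPE": "noun"}},
                          "CONTENT": {"INDEX": {"PER": "3rd", "NUM": "sg"}}}],
                "COMPS": [],
            },
        },
        "CONTENT": {"RELN": "walk"},
    },
}

# "she": a saturated noun whose index is third-person singular (and feminine).
she = {
    "PHON": ["she"],
    "SYNSEM": {
        "CAT": {"HEAD": {"TYPE": "noun"},
                "VALENCE": {"SUBJ": [], "COMPS": []}},
        "CONTENT": {"INDEX": {"PER": "3rd", "NUM": "sg", "GEND": "fem"}},
    },
}

subj_requirement = walks["SYNSEM"]["CAT"]["VALENCE"]["SUBJ"][0]
print(compatible(subj_requirement, she["SYNSEM"]))  # True

they_synsem = {"CAT": {"HEAD": {"TYPE": "noun"}},
               "CONTENT": {"INDEX": {"PER": "3rd", "NUM": "pl"}}}
print(compatible(subj_requirement, they_synsem))    # False: NUM clash
```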

Signs of type phrase unify with one or more children and propagate information upward. The following AVM encodes the immediate dominance rule for a head-subj-phrase, which requires two children: the head child (a verb) and a non-head child that fulfills the verb's SUBJ constraints.

The end result is a sign with a verb head, empty subcategorization features, and a phonological value that orders the two children.
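The effect of the head-subj-phrase rule can be sketched as a Python function over the same kind of simplified dictionaries. This is an illustration under stated assumptions, not an implementation from any particular grammar: the names `head_subj_phrase` and `compatible` are hypothetical, the daughter features are simplified, the check that the head still needs exactly one subject and no complements is a simplification, and real systems state the rule as a feature structure that is unified with its daughters rather than as procedural code. Passing the head daughter's HEAD value up unchanged follows the spirit of the Head Feature Principle.

```python
def compatible(a, b):
    """Simplified unifiability test on nested dicts (no types, no structure sharing)."""
    if isinstance(a, dict) and isinstance(b, dict):
        return all(compatible(a[f], b[f]) for f in a.keys() & b.keys())
    return a == b


def head_subj_phrase(head_dtr, subj_dtr):
    """Build a simplified head-subj-phrase, or return None if the daughters don't fit."""
    valence = head_dtr["SYNSEM"]["CAT"]["VALENCE"]
    # The head must still be looking for exactly one subject and no complements.
    if len(valence["SUBJ"]) != 1 or valence["COMPS"]:
        return None
    # The non-head daughter must satisfy the head's SUBJ description.
    if not compatible(valence["SUBJ"][0], subj_dtr["SYNSEM"]):
        return None
    return {
        "TYPE": "head-subj-phrase",
        "PHON": subj_dtr["PHON"] + head_dtr["PHON"],  # subject precedes head
        "SYNSEM": {
            "CAT": {
                # Same dict object as the head daughter's HEAD: shared, not copied.
                "HEAD": head_dtr["SYNSEM"]["CAT"]["HEAD"],
                "VALENCE": {"SUBJ": [], "COMPS": []},  # requirements discharged
            },
            "CONTENT": head_dtr["SYNSEM"]["CONTENT"],
        },
        "HEAD-DTR": head_dtr,
        "NON-HEAD-DTR": subj_dtr,
    }


walks = {"PHON": ["walks"],
         "SYNSEM": {"CAT": {"HEAD": {"TYPE": "verb"},
                            "VALENCE": {"SUBJ": [{"CAT": {"HEAD": {"TYPE": "noun"}},
                                                  "CONTENT": {"INDEX": {"PER": "3rd", "NUM": "sg"}}}],
                                        "COMPS": []}},
                    "CONTENT": {"RELN": "walk"}}}
she = {"PHON": ["she"],
       "SYNSEM": {"CAT": {"HEAD": {"TYPE": "noun"}, "VALENCE": {"SUBJ": [], "COMPS": []}},
                  "CONTENT": {"INDEX": {"PER": "3rd", "NUM": "sg"}}}}

s = head_subj_phrase(walks, she)
print(s["PHON"])                     # ['she', 'walks']
print(s["SYNSEM"]["CAT"]["HEAD"])    # {'TYPE': 'verb'}
print(head_subj_phrase(she, walks))  # None: "she" has no SUBJ requirement
```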

Although the actual grammar of HPSG is composed entirely of feature structures, linguists often use trees to represent the unification of signs where the equivalent AVM would be unwieldy.

Implementations

Various parsers based on the HPSG formalism have been written, and optimizations are currently being investigated. An example of a system analyzing German sentences is provided by the Freie Universität Berlin.[6] In addition, the CoreGram[7] project of the Grammar Group of the Freie Universität Berlin provides open-source grammars implemented in the TRALE system. Currently there are grammars for German,[8] Danish,[9] Mandarin Chinese,[10] Maltese,[11] and Persian[12] that share a common core and are publicly available.

Large HPSG grammars of various languages are being developed in the Deep Linguistic Processing with HPSG Initiative (DELPH-IN).[13] Wide-coverage grammars of English,[14] German,[15] and Japanese[16] are available under an open-source license. These grammars can be used with a variety of inter-compatible open-source HPSG parsers: LKB, PET,[17] Ace,[18] and agree.[19] All of these produce semantic representations in the Minimal Recursion Semantics (MRS) format.[20] The declarative nature of the HPSG formalism means that these computational grammars can typically be used for both parsing and generation (producing surface strings from semantic inputs). Treebanks, also distributed by DELPH-IN, are used to develop and test the grammars, as well as to train ranking models that select plausible interpretations when parsing (or realizations when generating).

Enju is a freely available wide-coverage probabilistic HPSG parser for English developed by the Tsujii Laboratory at The University of Tokyo in Japan.[21]

See also

- Lexical-functional grammar

- Minimal recursion semantics

- Relational grammar

- Syntax

- Transformational grammar

- Type Description Language

References

- ^ https://www.acsu.buffalo.edu/~rchaves/hpsg-ideas.html [permanent dead link]

- ^ Pollard, Carl; Ivan A. Sag (1987). Information-Based Syntax and Semantics. Volume 1: Fundamentals. CSLI Lecture Notes 13. Stanford: CSLI Publications.

- ^ a b Pollard, Carl; Ivan A. Sag (1994). Head-Driven Phrase Structure Grammar. Chicago: University of Chicago Press.

- ^ Sag, Ivan A. (1997). "English Relative Clause Constructions" [permanent dead link]. Journal of Linguistics 33(2): 431-484.

- ^ Sag, Ivan A.; Thomas Wasow; Emily M. Bender (2003). Syntactic Theory: A Formal Introduction (2nd ed.). Chicago: University of Chicago Press.

- ^ The Babel-System: HPSG Interactive

- ^ The CoreGram Project

- ^ Berligram

- ^ DanGram

- ^ Chinese

- ^ Maltese

- ^ Persian

- ^ DELPH-IN: Open-Source Deep Processing

- ^ English Resource Grammar and Lexicon

- ^ Berthold Crysmann

- ^ JacyTop - Deep Linguistic Processing with HPSG (DELPH-IN)

- ^ DELPH-IN PET parser

- ^ Ace: the Answer Constraint Engine

- ^ agree grammar engineering

- ^ Copestake, A., Flickinger, D., Pollard, C., & Sag, I. A. (2005). Minimal recursion semantics: An introduction. Research on Language and Computation, 3(2-3), 281-332.

- ^ Tsujii Lab: Enju parser home page. Archived 2010-03-07 at the Wayback Machine (retrieved Nov 24, 2009).

Further reading

- Carl Pollard, Ivan A. Sag (1987): Information-based Syntax and Semantics. Volume 1: Fundamentals. Stanford: CSLI Publications.

- Carl Pollard, Ivan A. Sag (1994): Head-Driven Phrase Structure Grammar. Chicago: University of Chicago Press.

- Ivan A. Sag, Thomas Wasow, Emily M. Bender (2003): Syntactic Theory: A Formal Introduction, Second Edition. Chicago: University of Chicago Press.

- Levine, Robert D.; W. Detmar Meurers (2006). "Head-Driven Phrase Structure Grammar: Linguistic Approach, Formal Foundations, and Computational Realization" (PDF). In Keith Brown (ed.). Encyclopedia of Language and Linguistics (second ed.). Oxford: Elsevier. Archived from the original (PDF) on 2008-09-05. Retrieved 2008-03-07.

- Müller, Stefan (2013). "Unifying Everything: Some Remarks on Simpler Syntax, Construction Grammar, Minimalism and HPSG". Language. doi:10.1353/lan.2013.0061.

External links

- Stanford HPSG homepage – includes online proceedings of an annual HPSG conference

- Ohio State HPSG homepage

- International Conference on Head-Driven Phrase Structure Grammar

- DELPH-IN network for HPSG grammar development

- Basic Overview of HPSG

- Comparison of HPSG with alternatives, and a historical perspective

- Bibliography of HPSG Publications

- LaTeX package for drawing AVMs – includes documentation