Long short-term memory


Long short-term memory (LSTM) units are units of a recurrent neural network (RNN). An RNN composed of LSTM units is often called an LSTM network (or just LSTM). A common LSTM unit is composed of a cell, an input gate, an output gate and a forget gate. The cell remembers values over arbitrary time intervals and the three gates regulate the flow of information into and out of the cell.

LSTM networks are well-suited to classifying, processing and making predictions based on time series data, since there can be lags of unknown duration between important events in a time series. LSTMs were developed to deal with the exploding and vanishing gradient problems that can be encountered when training traditional RNNs. Relative insensitivity to gap length is an advantage of LSTM over RNNs, hidden Markov models and other sequence learning methods in numerous applications[citation needed].

History

LSTM was proposed in 1997 by Sepp Hochreiter and Jürgen Schmidhuber.[1] By introducing Constant Error Carousel (CEC) units, LSTM deals with the exploding and vanishing gradient problems. The initial version of the LSTM block included cells, input gates and output gates.[2]

In 1999, Felix Gers introduced the forget gate (also called "keep gate") into the LSTM architecture, enabling the LSTM to reset its own state.[2]

In 2000, Felix Gers and Jürgen Schmidhuber added peephole connections (connections from the cell to the gates) to the architecture.[3] Additionally, the output activation function was omitted.[2]

In 2014, Kyunghyun Cho et al. put forward a simplified variant called the gated recurrent unit (GRU).[4]

Among other successes, LSTM achieved record results in natural language text compression[5] and unsegmented connected handwriting recognition,[6] and won the ICDAR handwriting competition (2009). LSTM networks were a major component of a network that achieved a record 17.7% phoneme error rate on the classic TIMIT natural speech dataset (2013).[7]

As of 2016, major technology companies including Google, Apple, and Microsoft were using LSTM as a fundamental component in new products.[8] For example, Google used LSTM for speech recognition on the smartphone,[9][10] for the smart assistant Allo[11] and for Google Translate.[12][13] Apple used LSTM for the "Quicktype" function on the iPhone[14][15] and for Siri.[16] Amazon used LSTM for Amazon Alexa.[17]

In 2017, researchers from Michigan State University, IBM Research, and Cornell University published a study at the Knowledge Discovery and Data Mining (KDD) conference.[18][19][20] Their study describes a novel neural network that is reported to perform better than the widely used long short-term memory neural network.

Also in 2017, Microsoft reported reaching 95.1% recognition accuracy on the Switchboard corpus, incorporating a vocabulary of 165,000 words. The approach used "dialog session-based long-short-term memory".[21]

Idea

In theory, classic (or "vanilla") RNNs can keep track of arbitrary long-term dependencies in the input sequences. The problem of vanilla RNNs is computational (or practical) in nature: when training a vanilla RNN using back-propagation, the gradients which are back-propagated can "vanish" (that is, they can tend to zero) or "explode" (that is, they can tend to infinity), because of the computations involved in the process, which use finite-precision numbers. RNNs using LSTM units partially solve the vanishing gradient problem, because LSTM units allow gradients to also flow unchanged. However, LSTM networks can still suffer from the exploding gradient problem[22].

Architecture

There are several architectures of LSTM units. A common architecture is composed of a cell (the memory part of the LSTM unit) and three "regulators", usually called gates, of the flow of information inside the LSTM unit: an input gate, an output gate and a forget gate. Some variations of the LSTM unit lack one or more of these gates or have other gates. For example, gated recurrent units (GRUs) do not have an output gate.

Intuitively, the cell is responsible for keeping track of the dependencies between the elements in the input sequence. The input gate controls the extent to which a new value flows into the cell, the forget gate controls the extent to which a value remains in the cell and the output gate controls the extent to which the value in the cell is used to compute the output activation of the LSTM unit. The activation function of the LSTM gates is often the logistic function.

There are connections into and out of the LSTM gates, a few of which are recurrent. The weights of these connections, which need to be learned during training, determine how the gates operate.

Variants

In the equations below, the lowercase variables represent vectors. Matrices $W_q$ and $U_q$ contain, respectively, the weights of the input and recurrent connections, where the subscript $q$ can be the input gate $i$, the output gate $o$, the forget gate $f$ or the memory cell $c$, depending on the activation being calculated. This section thus uses a "vector notation": for example, $c_t \in \mathbb{R}^h$ is not just one cell of one LSTM unit, but contains the cells of $h$ LSTM units.

LSTM with a forget gate

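The compact forms of the equations for the forward pass of an LSTM unit with a forget gate are:[1][3]

$$
\begin{aligned}
f_t &= \sigma_g(W_f x_t + U_f h_{t-1} + b_f) \\
i_t &= \sigma_g(W_i x_t + U_i h_{t-1} + b_i) \\
o_t &= \sigma_g(W_o x_t + U_o h_{t-1} + b_o) \\
c_t &= f_t \circ c_{t-1} + i_t \circ \sigma_c(W_c x_t + U_c h_{t-1} + b_c) \\
h_t &= o_t \circ \sigma_h(c_t)
\end{aligned}
$$

where the initial values are $c_0 = 0$ and $h_0 = 0$ and the operator $\circ$ denotes the Hadamard product (element-wise product). The subscript $t$ indexes the time step.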

Variables

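- $x_t \in \mathbb{R}^d$: input vector to the LSTM unit

- $f_t \in \mathbb{R}^h$: forget gate's activation vector

- $i_t \in \mathbb{R}^h$: input gate's activation vector

- $o_t \in \mathbb{R}^h$: output gate's activation vector

- $h_t \in \mathbb{R}^h$: hidden state vector, also known as the output vector of the LSTM unit

- $c_t \in \mathbb{R}^h$: cell state vector

- $W \in \mathbb{R}^{h \times d}$, $U \in \mathbb{R}^{h \times h}$ and $b \in \mathbb{R}^h$: weight matrices and bias vector parameters which need to be learned during training

where the superscripts $d$ and $h$ refer to the number of input features and number of hidden units, respectively.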

Activation functions

- $\sigma_g$: sigmoid function.

- $\sigma_c$: hyperbolic tangent function.

- $\sigma_h$: hyperbolic tangent function or, as the peephole LSTM paper[23][24] suggests, $\sigma_h(x) = x$.
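The forward pass described in this section can be sketched in plain Python. This is a minimal illustration and not a reference implementation: it assumes the standard forget-gate formulation, in which each gate is a sigmoid of an affine function of the current input and the previous hidden state, and it takes the output activation to be the hyperbolic tangent. The names `lstm_step`, `affine` and `params` are hypothetical and chosen only for this example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def affine(W, U, b, x, h_prev):
    """Return W x + U h_prev + b, with matrices given as lists of rows."""
    return [
        sum(w * xj for w, xj in zip(W_row, x))
        + sum(u * hj for u, hj in zip(U_row, h_prev))
        + bk
        for W_row, U_row, bk in zip(W, U, b)
    ]

def lstm_step(x, h_prev, c_prev, params):
    """One time step of an LSTM layer with a forget gate.

    params maps each of "f", "i", "o", "c" to a (W, U, b) triple
    (an assumed layout for this sketch).
    """
    def activate(q, act):
        return [act(z) for z in affine(*params[q], x, h_prev)]

    f = activate("f", sigmoid)          # forget gate
    i = activate("i", sigmoid)          # input gate
    o = activate("o", sigmoid)          # output gate
    c_new = activate("c", math.tanh)    # candidate cell values
    # Element-wise (Hadamard) products combine old state and new candidate.
    c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c_prev, i, c_new)]
    h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]
    return h, c

# Usage: d = 2 input features, 1 hidden unit, all parameters zero.
d, h_units = 2, 1
zero = (
    [[0.0] * d for _ in range(h_units)],        # W
    [[0.0] * h_units for _ in range(h_units)],  # U
    [0.0] * h_units,                            # b
)
params = {q: zero for q in "fioc"}
h, c = lstm_step([0.3, -0.7], [0.0], [1.0], params)
```

With all parameters zero, every gate evaluates to 0.5 and the candidate cell value is 0, so the step simply halves the previous cell state. To process a sequence, the function would be called once per time step, starting from zero vectors for the hidden and cell states.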

Peephole LSTM

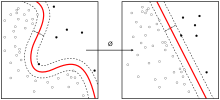

The figure on the right is a graphical representation of an LSTM unit with peephole connections (i.e. a peephole LSTM).[23][24] Peephole connections allow the gates to access the constant error carousel (CEC), whose activation is the cell state.[25] In this variant, $h_{t-1}$ is not used; $c_{t-1}$ is used instead in most places.
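As a sketch of what this substitution looks like, the forward pass of a peephole LSTM might be written as follows. This is a reconstruction under assumptions: it reuses the symbols of the forget-gate LSTM ($x_t$ input, $h_t$ hidden state, $c_t$ cell state, $W$, $U$, $b$ learned parameters, $\circ$ the element-wise product), and it lets the gates read the previous cell state $c_{t-1}$ where they would otherwise read the previous hidden state.

$$
\begin{aligned}
f_t &= \sigma_g(W_f x_t + U_f c_{t-1} + b_f) \\
i_t &= \sigma_g(W_i x_t + U_i c_{t-1} + b_i) \\
o_t &= \sigma_g(W_o x_t + U_o c_{t-1} + b_o) \\
c_t &= f_t \circ c_{t-1} + i_t \circ \sigma_c(W_c x_t + b_c) \\
h_t &= o_t \circ \sigma_h(c_t)
\end{aligned}
$$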

Peephole convolutional LSTM

In the peephole convolutional LSTM,[26] $*$ denotes the convolution operator.
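One way to write the forward pass of this variant is sketched below. It is a reconstruction under assumptions rather than a quotation: the matrix products of the forget-gate LSTM are replaced by convolutions, and the symbols $V_f$, $V_i$ and $V_o$ (not defined elsewhere in this article) stand for peephole weights that are combined element-wise with the cell state.

$$
\begin{aligned}
f_t &= \sigma_g(W_f * x_t + U_f * h_{t-1} + V_f \circ c_{t-1} + b_f) \\
i_t &= \sigma_g(W_i * x_t + U_i * h_{t-1} + V_i \circ c_{t-1} + b_i) \\
c_t &= f_t \circ c_{t-1} + i_t \circ \sigma_c(W_c * x_t + U_c * h_{t-1} + b_c) \\
o_t &= \sigma_g(W_o * x_t + U_o * h_{t-1} + V_o \circ c_t + b_o) \\
h_t &= o_t \circ \sigma_h(c_t)
\end{aligned}
$$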

Training

To minimize an LSTM's total error on a set of training sequences, an optimization algorithm such as gradient descent is often employed, with backpropagation through time used to compute the required gradients. Each weight of the LSTM network is then changed in proportion to the derivative of the error (at the output layer of the LSTM network) with respect to that weight.
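In its simplest form, the update described above can be written as a single rule. The symbols are assumptions introduced for illustration: $w$ is any one weight of the network, $E$ is the total error and $\eta > 0$ is a learning rate.

$$
w \leftarrow w - \eta \, \frac{\partial E}{\partial w}
$$

Practical optimizers often modify this rule (for example with momentum or per-weight step sizes), but the partial derivative supplied by backpropagation through time plays the same role.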

A problem with using gradient descent for standard RNNs is that error gradients vanish exponentially quickly with the size of the time lag between important events. This is due to $\lim_{n \to \infty} W^n = 0$ if the spectral radius of $W$ is smaller than 1.[27][28]
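The effect of the spectral radius can be checked numerically. The sketch below is a simplification that assumes a linear recurrence, so that the error signal carried back over n time steps is scaled by the n-th power of the recurrent weight matrix; the matrix `W` and the helper names are hypothetical examples.

```python
def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]

def max_abs_entry(A):
    return max(abs(v) for row in A for v in row)

# Upper-triangular example: the eigenvalues are the diagonal entries
# 0.5 and 0.6, so the spectral radius is 0.6 (smaller than 1).
W = [[0.5, 0.4],
     [0.0, 0.6]]

power = [[1.0, 0.0], [0.0, 1.0]]  # identity, i.e. W to the power 0
sizes = []
for n in range(1, 51):
    power = matmul(power, W)       # now holds W to the power n
    sizes.append(max_abs_entry(power))

print(sizes[0], sizes[9], sizes[49])  # largest entry after 1, 10 and 50 steps
```

The largest entry of the matrix power shrinks geometrically, which is why a gradient signal that must cross many time steps becomes too small to drive learning.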

However, with LSTM units, when error values are back-propagated from the output layer, the error remains in the LSTM unit's cell. This "error carousel" continuously feeds error back to each of the LSTM unit's gates, until they learn to cut off the value.
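A rough numerical illustration of why the error can persist in the cell: if the gates are treated as constants, the cell update multiplies the previous cell state by the forget gate, so the error carried back along the cell over many steps is scaled by the product of the forget-gate activations. This is a simplified sketch under that assumption (it ignores the paths through the gates themselves), and `carried_error` is a hypothetical helper.

```python
import math

def carried_error(forget_activations):
    """Factor by which an error signal is scaled along the cell path,
    assuming the gate activations are treated as constants."""
    return math.prod(forget_activations)

steps = 100
open_carousel = carried_error([1.0] * steps)   # forget gate fully open
leaky_carousel = carried_error([0.9] * steps)  # forget gate at 0.9 each step

print(open_carousel, leaky_carousel)
```

With the forget gate held at 1, the factor stays exactly 1 over any number of steps, which is the constant error carousel; with activations below 1, the cell deliberately lets old information and its error signal decay.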

CTC score function

Many applications use stacks of LSTM RNNs[29] and train them by connectionist temporal classification (CTC)[30] to find an RNN weight matrix that maximizes the probability of the label sequences in a training set, given the corresponding input sequences. CTC achieves both alignment and recognition.
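The article does not spell out how CTC ties alignment to recognition. In the formulation of Graves et al.,[30] the network emits one symbol or a special blank per input frame, and a frame-level path is mapped to a label sequence by merging repeated symbols and then dropping blanks; the probability of a label sequence is the sum over all paths that collapse to it. The sketch below shows only that collapsing map, with `ctc_collapse` and the blank character `-` chosen as illustrative assumptions.

```python
from itertools import groupby

BLANK = "-"  # assumed blank symbol for this example

def ctc_collapse(path, blank=BLANK):
    """Map a frame-level path to a label sequence:
    merge consecutive repeats, then remove blanks."""
    merged = [symbol for symbol, _ in groupby(path)]
    return "".join(s for s in merged if s != blank)

print(ctc_collapse("hh-e-ll-lo"))  # hello
print(ctc_collapse("a-a"))         # aa
print(ctc_collapse("aa"))          # a
```

The blank is what allows a genuinely doubled letter to survive the merge: `a-a` yields two labels, while `aa` without a blank yields one.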

Applications

Applications of LSTM include:

- Robot control[31]

- Time series prediction[32]

- Speech recognition[33][34][35]

- Rhythm learning[24]

- Music composition[36]

- Grammar learning[37][23][38]

- Handwriting recognition[39][40]

- Human action recognition[41]

- Sign Language Translation[42]

- Protein Homology Detection[43]

- Predicting subcellular localization of proteins[44]

- Time series anomaly detection[45]

- Several prediction tasks in the area of business process management[46]

- Prediction in medical care pathways[47]

- Semantic parsing[48]

- Object Co-segmentation[49][50]

See also

- Recurrent neural network

- Gated recurrent unit

- Differentiable neural computer

- Long-term potentiation

- Prefrontal cortex basal ganglia working memory

- Time series

References

- ^ a b Sepp Hochreiter; Jürgen Schmidhuber (1997). "Long short-term memory". Neural Computation. 9 (8): 1735–1780. doi:10.1162/neco.1997.9.8.1735. PMID 9377276.

- ^ a b c d Klaus Greff; Rupesh Kumar Srivastava; Jan Koutník; Bas R. Steunebrink; Jürgen Schmidhuber (2015). "LSTM: A Search Space Odyssey". IEEE Transactions on Neural Networks and Learning Systems. 28 (10): 2222–2232. arXiv:1503.04069. doi:10.1109/TNNLS.2016.2582924. PMID 27411231.

- ^ a b Felix A. Gers; Jürgen Schmidhuber; Fred Cummins (2000). "Learning to Forget: Continual Prediction with LSTM". Neural Computation. 12 (10): 2451–2471. CiteSeerX 10.1.1.55.5709. doi:10.1162/089976600300015015.

- ^ Cho, Kyunghyun; van Merrienboer, Bart; Gulcehre, Caglar; Bahdanau, Dzmitry; Bougares, Fethi; Schwenk, Holger; Bengio, Yoshua (2014). "Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation". arXiv:1406.1078 [cs.CL].

- ^ "The Large Text Compression Benchmark". Retrieved 2017-01-13.

- ^ Graves, A.; Liwicki, M.; Fernández, S.; Bertolami, R.; Bunke, H.; Schmidhuber, J. (May 2009). "A Novel Connectionist System for Unconstrained Handwriting Recognition". IEEE Transactions on Pattern Analysis and Machine Intelligence. 31 (5): 855–868. CiteSeerX 10.1.1.139.4502. doi:10.1109/tpami.2008.137. ISSN 0162-8828. PMID 19299860.

- ^ Graves, Alex; Mohamed, Abdel-rahman; Hinton, Geoffrey (2013-03-22). "Speech Recognition with Deep Recurrent Neural Networks". arXiv:1303.5778 [cs.NE].

- ^ "With QuickType, Apple wants to do more than guess your next text. It wants to give you an AI". WIRED. 2016-06-14. Retrieved 2016-06-16.

- ^ Beaufays, Françoise (August 11, 2015). "The neural networks behind Google Voice transcription". Research Blog. Retrieved 2017-06-27.

- ^ Sak, Haşim; Senior, Andrew; Rao, Kanishka; Beaufays, Françoise; Schalkwyk, Johan (September 24, 2015). "Google voice search: faster and more accurate". Research Blog. Retrieved 2017-06-27.

- ^ Khaitan, Pranav (May 18, 2016). "Chat Smarter with Allo". Research Blog. Retrieved 2017-06-27.

- ^ Wu, Yonghui; Schuster, Mike; Chen, Zhifeng; Le, Quoc V.; Norouzi, Mohammad; Macherey, Wolfgang; Krikun, Maxim; Cao, Yuan; Gao, Qin (2016-09-26). "Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation". arXiv:1609.08144 [cs.CL].

- ^ Metz, Cade (September 27, 2016). "An Infusion of AI Makes Google Translate More Powerful Than Ever". Wired. Retrieved 2017-06-27.

- ^ Efrati, Amir (June 13, 2016). "Apple's Machines Can Learn Too". The Information. Retrieved 2017-06-27.

- ^ Ranger, Steve (June 14, 2016). "iPhone, AI and big data: Here's how Apple plans to protect your privacy". ZDNet. Retrieved 2017-06-27.

- ^ Smith, Chris (2016-06-13). "iOS 10: Siri now works in third-party apps, comes with extra AI features". BGR. Retrieved 2017-06-27.

- ^ Vogels, Werner (30 November 2016). "Bringing the Magic of Amazon AI and Alexa to Apps on AWS". All Things Distributed. www.allthingsdistributed.com. Retrieved 2017-06-27.

- ^ "Patient Subtyping via Time-Aware LSTM Networks" (PDF). msu.edu. Retrieved 21 Nov 2018.

- ^ "Patient Subtyping via Time-Aware LSTM Networks". Kdd.org. Retrieved 24 May 2018.

- ^ "SIGKDD". Kdd.org. Retrieved 24 May 2018.

- ^ Haridy, Rich (August 21, 2017). "Microsoft's speech recognition system is now as good as a human". newatlas.com. Retrieved 2017-08-27.

- ^ bro, n. "Why can RNNs with LSTM units also suffer from "exploding gradients"?". Cross Validated. Retrieved 25 December 2018.

- ^ a b c Gers, F. A.; Schmidhuber, J. (2001). "LSTM Recurrent Networks Learn Simple Context Free and Context Sensitive Languages" (PDF). IEEE Transactions on Neural Networks. 12 (6): 1333–1340. doi:10.1109/72.963769. PMID 18249962.

- ^ a b c Gers, F.; Schraudolph, N.; Schmidhuber, J. (2002). "Learning precise timing with LSTM recurrent networks" (PDF). Journal of Machine Learning Research. 3: 115–143.

- ^ Gers, F. A.; Schmidhuber, J. (November 2001). "LSTM recurrent networks learn simple context-free and context-sensitive languages" (PDF). IEEE Transactions on Neural Networks. 12 (6): 1333–1340. doi:10.1109/72.963769. ISSN 1045-9227. PMID 18249962.

- ^ Xingjian Shi; Zhourong Chen; Hao Wang; Dit-Yan Yeung; Wai-kin Wong; Wang-chun Woo (2015). "Convolutional LSTM Network: A Machine Learning Approach for Precipitation Nowcasting". Proceedings of the 28th International Conference on Neural Information Processing Systems: 802–810. arXiv:1506.04214. Bibcode:2015arXiv150604214S.

- ^ S. Hochreiter. Untersuchungen zu dynamischen neuronalen Netzen. Diploma thesis, Institut f. Informatik, Technische Univ. Munich, 1991.

- ^ Hochreiter, S.; Bengio, Y.; Frasconi, P.; Schmidhuber, J. (2001). "Gradient Flow in Recurrent Nets: the Difficulty of Learning Long-Term Dependencies". In Kremer, S. C.; Kolen, J. F. (eds.). A Field Guide to Dynamical Recurrent Neural Networks. IEEE Press.

- ^ Fernández, Santiago; Graves, Alex; Schmidhuber, Jürgen (2007). "Sequence labelling in structured domains with hierarchical recurrent neural networks". Proc. 20th Int. Joint Conf. on Artificial Intelligence, IJCAI 2007: 774–779. CiteSeerX 10.1.1.79.1887.

- ^ Graves, Alex; Fernández, Santiago; Gomez, Faustino (2006). "Connectionist temporal classification: Labelling unsegmented sequence data with recurrent neural networks". In Proceedings of the International Conference on Machine Learning, ICML 2006: 369–376. CiteSeerX 10.1.1.75.6306.

- ^ Mayer, H.; Gomez, F.; Wierstra, D.; Nagy, I.; Knoll, A.; Schmidhuber, J. (October 2006). A System for Robotic Heart Surgery that Learns to Tie Knots Using Recurrent Neural Networks. 2006 IEEE/RSJ International Conference on Intelligent Robots and Systems. pp. 543–548. CiteSeerX 10.1.1.218.3399. doi:10.1109/IROS.2006.282190. ISBN 978-1-4244-0258-8.

- ^ Wierstra, Daan; Schmidhuber, J.; Gomez, F. J. (2005). "Evolino: Hybrid Neuroevolution/Optimal Linear Search for Sequence Learning". Proceedings of the 19th International Joint Conference on Artificial Intelligence (IJCAI), Edinburgh: 853–858.

- ^ Graves, A.; Schmidhuber, J. (2005). "Framewise phoneme classification with bidirectional LSTM and other neural network architectures". Neural Networks. 18 (5–6): 602–610. CiteSeerX 10.1.1.331.5800. doi:10.1016/j.neunet.2005.06.042. PMID 16112549.

- ^ Fernández, Santiago; Graves, Alex; Schmidhuber, Jürgen (2007). An Application of Recurrent Neural Networks to Discriminative Keyword Spotting. Proceedings of the 17th International Conference on Artificial Neural Networks. ICANN'07. Berlin, Heidelberg: Springer-Verlag. pp. 220–229. ISBN 978-3540746935.

- ^ Graves, Alex; Mohamed, Abdel-rahman; Hinton, Geoffrey (2013). "Speech Recognition with Deep Recurrent Neural Networks". Acoustics, Speech and Signal Processing (ICASSP), 2013 IEEE International Conference on: 6645–6649.

- ^ Eck, Douglas; Schmidhuber, Jürgen (2002-08-28). Learning the Long-Term Structure of the Blues. Artificial Neural Networks — ICANN 2002. Lecture Notes in Computer Science. 2415. Springer, Berlin, Heidelberg. pp. 284–289. CiteSeerX 10.1.1.116.3620. doi:10.1007/3-540-46084-5_47. ISBN 978-3540460848.

- ^ Schmidhuber, J.; Gers, F.; Eck, D. (2002). "Learning nonregular languages: A comparison of simple recurrent networks and LSTM". Neural Computation. 14 (9): 2039–2041. CiteSeerX 10.1.1.11.7369. doi:10.1162/089976602320263980. PMID 12184841.

- ^ Perez-Ortiz, J. A.; Gers, F. A.; Eck, D.; Schmidhuber, J. (2003). "Kalman filters improve LSTM network performance in problems unsolvable by traditional recurrent nets". Neural Networks. 16 (2): 241–250. CiteSeerX 10.1.1.381.1992. doi:10.1016/s0893-6080(02)00219-8. PMID 12628609.

- ^ A. Graves, J. Schmidhuber. Offline Handwriting Recognition with Multidimensional Recurrent Neural Networks. Advances in Neural Information Processing Systems 22, NIPS'22, pp 545–552, Vancouver, MIT Press, 2009.

- ^ Graves, Alex; Fernández, Santiago; Liwicki, Marcus; Bunke, Horst; Schmidhuber, Jürgen (2007). Unconstrained Online Handwriting Recognition with Recurrent Neural Networks. Proceedings of the 20th International Conference on Neural Information Processing Systems. NIPS'07. USA: Curran Associates Inc. pp. 577–584. ISBN 9781605603520.

- ^ M. Baccouche, F. Mamalet, C Wolf, C. Garcia, A. Baskurt. Sequential Deep Learning for Human Action Recognition. 2nd International Workshop on Human Behavior Understanding (HBU), A.A. Salah, B. Lepri ed. Amsterdam, Netherlands. pp. 29–39. Lecture Notes in Computer Science 7065. Springer. 2011

- ^ Huang, Jie; Zhou, Wengang; Zhang, Qilin; Li, Houqiang; Li, Weiping (2018-01-30). "Video-based Sign Language Recognition without Temporal Segmentation". arXiv:1801.10111 [cs.CV].

- ^ Hochreiter, S.; Heusel, M.; Obermayer, K. (2007). "Fast model-based protein homology detection without alignment". Bioinformatics. 23 (14): 1728–1736. doi:10.1093/bioinformatics/btm247. PMID 17488755.

- ^ Thireou, T.; Reczko, M. (2007). "Bidirectional Long Short-Term Memory Networks for predicting the subcellular localization of eukaryotic proteins". IEEE/ACM Transactions on Computational Biology and Bioinformatics (TCBB). 4 (3): 441–446. doi:10.1109/tcbb.2007.1015. PMID 17666763.

- ^ Malhotra, Pankaj; Vig, Lovekesh; Shroff, Gautam; Agarwal, Puneet (April 2015). "Long Short Term Memory Networks for Anomaly Detection in Time Series" (PDF). European Symposium on Artificial Neural Networks, Computational Intelligence and Machine Learning — ESANN 2015.

- ^ Tax, N.; Verenich, I.; La Rosa, M.; Dumas, M. (2017). Predictive Business Process Monitoring with LSTM neural networks. Proceedings of the International Conference on Advanced Information Systems Engineering (CAiSE). Lecture Notes in Computer Science. 10253. pp. 477–492. arXiv:1612.02130. doi:10.1007/978-3-319-59536-8_30. ISBN 978-3-319-59535-1.

- ^ Choi, E.; Bahadori, M.T.; Schuetz, E.; Stewart, W.; Sun, J. (2016). "Doctor AI: Predicting Clinical Events via Recurrent Neural Networks". Proceedings of the 1st Machine Learning for Healthcare Conference: 301–318. arXiv:1511.05942. Bibcode:2015arXiv151105942C.

- ^ Jia, Robin; Liang, Percy (2016-06-11). "Data Recombination for Neural Semantic Parsing". arXiv:1606.03622 [cs].

- ^ Wang, Le; Duan, Xuhuan; Zhang, Qilin; Niu, Zhenxing; Hua, Gang; Zheng, Nanning (2018-05-22). "Segment-Tube: Spatio-Temporal Action Localization in Untrimmed Videos with Per-Frame Segmentation" (PDF). Sensors. 18 (5): 1657. doi:10.3390/s18051657. ISSN 1424-8220. PMC 5982167. PMID 29789447.

- ^ Duan, Xuhuan; Wang, Le; Zhai, Changbo; Zheng, Nanning; Zhang, Qilin; Niu, Zhenxing; Hua, Gang (2018). Joint Spatio-Temporal Action Localization in Untrimmed Videos with Per-Frame Segmentation. 25th IEEE International Conference on Image Processing (ICIP). doi:10.1109/icip.2018.8451692. ISBN 978-1-4799-7061-2.

External links

- Recurrent Neural Networks with over 30 LSTM papers by Jürgen Schmidhuber's group at IDSIA

- Gers, Felix (2001). "Long Short-Term Memory in Recurrent Neural Networks" (PDF). PhD thesis.

- Gers, Felix A.; Schraudolph, Nicol N.; Schmidhuber, Jürgen (Aug 2002). "Learning precise timing with LSTM recurrent networks" (PDF). Journal of Machine Learning Research. 3: 115–143.

- Abidogun, Olusola Adeniyi (2005). "Data Mining, Fraud Detection and Mobile Telecommunications: Call Pattern Analysis with Unsupervised Neural Networks". Master's Thesis. hdl:11394/249. Archived (PDF) from the original on May 22, 2012. Includes two chapters devoted to explaining recurrent neural networks, especially LSTM.

- Monner, Derek D.; Reggia, James A. (2010). "A generalized LSTM-like training algorithm for second-order recurrent neural networks" (PDF). A high-performing extension of LSTM that has been simplified to a single node type and can train arbitrary architectures.

- Herta, Christian. "How to implement LSTM in Python with Theano". Tutorial.

- Chevalier, Guillaume. Tutorial: How to use LSTMs with TensorFlow in Python on cellphone sensor data on GitHub