Motor theory of speech perception

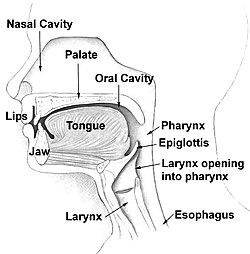

The motor theory of speech perception is the hypothesis that people perceive spoken words by identifying the vocal tract gestures with which they are pronounced rather than by identifying the sound patterns that speech generates.[1][2][3][4][5] It originally claimed that speech perception is done through a specialized module that is innate and human-specific. Though the idea of a module has been qualified in more recent versions of the theory,[5] the idea remains that the role of the speech motor system is not only to produce speech articulations but also to detect them.

The hypothesis has gained more interest outside the field of speech perception than inside it. This interest has increased particularly since the discovery of mirror neurons, which link the production and perception of motor movements, including those made by the vocal tract.[5][6]

The theory was initially proposed at Haskins Laboratories in the 1950s by Alvin Liberman and Franklin S. Cooper, and was developed further by Donald Shankweiler, Michael Studdert-Kennedy, Ignatius Mattingly, Carol Fowler and Douglas Whalen.

Origins and development

The hypothesis has its origins in research using pattern playback to create reading machines for the blind that would substitute sounds for orthographic letters.[7] This work led to a close examination of how spoken sounds correspond to their acoustic spectrograms as a sequence of auditory sounds. The examination found that successive consonants and vowels overlap in time with one another (a phenomenon known as coarticulation).[8][9][10] This suggested that speech is not heard like an acoustic "alphabet" or "cipher", but as a "code" of overlapping speech gestures.

Associationist approach

Initially, the theory was associationist: it held that infants mimic the speech they hear and that this leads to behavioristic associations between articulation and its sensory consequences. Later, this overt mimicry would be short-circuited and become speech perception.[9] This aspect of the theory was dropped, however, with the discovery that prelinguistic infants could already detect most of the phonetic contrasts used to separate different speech sounds.[1]

Cognitivist approach

The behavioristic approach was replaced by a cognitivist one in which there was a speech module.[1] The module detected speech in terms of hidden distal objects rather than at the proximal or immediate level of their input. The evidence for this came from research findings, such as duplex perception, that suggested speech processing was special.[11]

Changing distal objects

Initially, speech perception was assumed to link to speech objects that were both:

- the invariant movements of speech articulators[9]

- the invariant motor commands sent to muscles to move the vocal tract articulators[12]

This was later revised to include the phonetic gestures rather than motor commands,[1] and then the gestures intended by the speaker at a prevocal, linguistic level, rather than actual movements.[13]

Modern revision

The "speech is special" claim has been dropped,[5] as it was found that phenomena once taken as evidence for it, such as duplex perception, also occur for nonspeech sounds (for example, slamming doors).[14]

Mirror neurons

The discovery of mirror neurons has led to renewed interest in the motor theory of speech perception, and the theory still has its advocates,[5] although there are also critics.[15]

Support

Nonauditory gesture information

If speech is identified in terms of how it is physically made, then nonauditory information should be incorporated into speech percepts even if it is still subjectively heard as "sounds". This is, in fact, the case.

- The McGurk effect shows that seeing the production of a spoken syllable that differs from an auditory cue synchronized with it affects the perception of the auditory one. In other words, if someone hears "ba" but sees a video of someone pronouncing "ga", what they hear is different; some people believe they hear "da".

- People find it easier to hear speech in noise if they can see the speaker.[16]

- People can hear syllables better when their production can be felt haptically.[17]

Categorical perception

Using a speech synthesizer, speech sounds can be varied in place of articulation along a continuum from /bɑ/ to /dɑ/ to /ɡɑ/, or in voice onset time on a continuum from /dɑ/ to /tɑ/ (for example). When listeners are asked to discriminate between two different sounds, they perceive sounds as belonging to discrete categories, even though the sounds vary continuously. In other words, 10 sounds (with the sound on one extreme being /dɑ/ and the sound on the other extreme being /tɑ/, and the ones in the middle varying on a scale) may all be acoustically different from one another, but the listener will hear all of them as either /dɑ/ or /tɑ/. Likewise, the English consonant /d/ may vary in its acoustic details across different phonetic contexts (the /d/ in /du/ does not technically sound the same as the one in /di/, for example), but all /d/'s as perceived by a listener fall within one category (voiced alveolar plosive) and that is because "linguistic representations are abstract, canonical, phonetic segments or the gestures that underlie these segments."[18] This suggests that humans identify speech using categorical perception, and thus that a specialized module, such as that proposed by the motor theory of speech perception, may be on the right track.[19]
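The labeling pattern described above can be illustrated with a minimal sketch. It assumes a hypothetical 10-step voice onset time continuum and a logistic labeling function; the boundary (45 ms) and slope are illustrative values chosen for this example, not measured data. It also assumes, purely for illustration, that listeners discriminate two sounds only on the basis of the labels they assign, so that predicted ABX accuracy is 0.5 + 0.5 × (p1 − p2)².

```python
import math

# Hypothetical 10-step voice onset time (VOT) continuum, in milliseconds.
VOT_STEPS_MS = [0, 10, 20, 30, 40, 50, 60, 70, 80, 90]

# Assumed values for illustration only, not measured data.
BOUNDARY_MS = 45.0   # VOT at which /dɑ/ and /tɑ/ labels are equally likely
SLOPE_PER_MS = 0.3   # steepness of the labeling function


def prob_t(vot_ms: float) -> float:
    """Probability of labeling a stimulus as /tɑ/ under a logistic model."""
    return 1.0 / (1.0 + math.exp(-SLOPE_PER_MS * (vot_ms - BOUNDARY_MS)))


def label(vot_ms: float) -> str:
    """Most likely category label for a stimulus."""
    return "tɑ" if prob_t(vot_ms) >= 0.5 else "dɑ"


def predicted_abx(vot_a: float, vot_b: float) -> float:
    """Predicted ABX accuracy if only category labels are available."""
    diff = prob_t(vot_a) - prob_t(vot_b)
    return 0.5 + 0.5 * diff * diff


# Label every step, then score each pair of neighbouring steps.
labels = [label(v) for v in VOT_STEPS_MS]
pairs = list(zip(VOT_STEPS_MS, VOT_STEPS_MS[1:]))
abx = [predicted_abx(a, b) for a, b in pairs]
best_pair = pairs[abx.index(max(abx))]

print(labels)     # five "dɑ" labels followed by five "tɑ" labels
print(best_pair)  # (40, 50): the pair that straddles the assumed boundary
```

Under these assumptions, the ten acoustically distinct stimuli receive only two labels, and predicted discrimination stays near chance (0.5) for neighbouring steps within a category while rising for the pair that straddles the boundary.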

Speech imitation

If people can hear the gestures in speech, then the imitation of speech should be very fast, as when words heard over headphones are repeated in speech shadowing.[20] People can repeat heard syllables more quickly than they would be able to produce them normally.[21]

Speech production

- Hearing speech activates vocal tract muscles,[22] and the motor cortex[23] and premotor cortex.[24] The integration of auditory and visual input in speech perception also involves such areas.[25]

- Disrupting the premotor cortex disrupts the perception of speech units such as plosives.[26]

- The activation of the motor areas occurs in terms of the phonemic features that link with the vocal tract articulators that create speech gestures.[27]

- The perception of a speech sound is aided by pre-emptively stimulating the motor representation of the articulators responsible for its pronunciation.[28]

- Auditory and motor cortical coupling is restricted to a specific range of neuronal firing frequency.[29]

Perception-action meshing

Evidence exists that perception and production are generally coupled in the motor system. This is supported by the existence of mirror neurons, which are activated both by seeing (or hearing) an action and by carrying that action out.[30] Another source of evidence is the support for common coding theory, which posits shared representations for perception and action.[31]

Criticisms

The motor theory of speech perception is not widely held in the field of speech perception, though it is more popular in other fields, such as theoretical linguistics. As three of its advocates have noted, "it has few proponents within the field of speech perception, and many authors cite it primarily to offer critical commentary".[5] (p. 361) Several critiques of it exist.[32]

Multiple sources

Speech perception is affected by nonproduction sources of information, such as context. Individual words are hard to understand in isolation but easy when heard in sentence context. It therefore seems that speech perception uses multiple sources that are integrated together in an optimal way.[32]

Production

The motor theory of speech perception would predict that speech motor abilities in infants predict their speech perception abilities, but in actuality it is the other way around.[34] It would also predict that defects in speech production would impair speech perception, but they do not.[35] However, this only affects the first and already superseded behaviorist version of the theory, where infants were supposed to learn all production-perception patterns by imitation early in childhood. This is no longer the mainstream view of motor-speech theorists.

Speech module

Several lines of evidence offered for a specialized speech module have not held up.

- Duplex perception can be observed with door slams.[14]

- The McGurk effect can also be achieved with nonlinguistic stimuli, such as showing someone a video of a basketball bouncing but playing the sound of a ping-pong ball bouncing.[citation needed]

- As for categorical perception, listeners can be sensitive to acoustic differences within single phonetic categories.

As a result, this part of the theory has been dropped by some researchers.[5]

Sublexical tasks

The evidence provided for the motor theory of speech perception is limited to tasks, such as syllable discrimination, that use speech units rather than full spoken words or spoken sentences. As a result, "speech perception is sometimes interpreted as referring to the perception of speech at the sublexical level. However, the ultimate goal of these studies is presumably to understand the neural processes supporting the ability to process speech sounds under ecologically valid conditions, that is, situations in which successful speech sound processing ultimately leads to contact with the mental lexicon and auditory comprehension."[36] This, however, creates the problem of "a tenuous connection to their implicit target of investigation, speech recognition".[36]

Imitation

The motor theory of speech perception faces the problem that the research linking speech perception to speech production is also consistent with the brain processing speech in order to imitate spoken words. The brain must have a means to do this if language is to exist, since a child's vocabulary expansion requires a means to learn novel spoken words, as does an adult's picking up of new names.[6] Imitation has to be initiated for all vocalizations, since a word's novelty cannot be known until after it is heard, by which time the information needed to identify its articulation gestures and motor goals has gone. As a result, vocal imitation needs to be initiated by default into short-term memory for every heard vocalization.[6] If speech perception uses multiple sources of information, this default imitation processing would, as a secondary use, provide an extra source for word perception. Since imitation will be most needed for vocalizations that are not proper words, this could explain why sublexical tasks that do not use proper words link so strongly to the processing of motor gestures.[6]

Birds

It has been suggested that birds also hear each other's bird song in terms of vocal gestures.[37]

References

1. Liberman, A. M.; Cooper, F. S.; Shankweiler, D. P.; Studdert-Kennedy, M. (1967). "Perception of the speech code". Psychological Review. 74 (6): 431–461. doi:10.1037/h0020279. PMID 4170865.

2. Liberman, A. M.; Mattingly, I. G. (1985). "The motor theory of speech perception revised". Cognition. 21 (1): 1–36. CiteSeerX 10.1.1.330.220. doi:10.1016/0010-0277(85)90021-6. PMID 4075760.

3. Liberman, A. M.; Mattingly, I. G. (1989). "A specialization for speech perception". Science. 243 (4890): 489–494. doi:10.1126/science.2643163. PMID 2643163.

4. Liberman, A. M.; Whalen, D. H. (2000). "On the relation of speech to language". Trends in Cognitive Sciences. 4 (5): 187–196. doi:10.1016/S1364-6613(00)01471-6. PMID 10782105.

5. Galantucci, B.; Fowler, C. A.; Turvey, M. T. (2006). "The motor theory of speech perception reviewed". Psychonomic Bulletin & Review. 13 (3): 361–377. doi:10.3758/bf03193857. PMC 2746041. PMID 17048719.

6. Skoyles, J. R. (1998). "Speech phones are a replication code". Medical Hypotheses. 50 (2): 167–173. doi:10.1016/S0306-9877(98)90203-1. PMID 9572572.

7. Liberman, A. M. (1996). Speech: A special code. Cambridge, MA: MIT Press. ISBN 978-0-262-12192-7.

8. Liberman, A. M.; Delattre, P.; Cooper, F. S. (1952). "The role of selected stimulus-variables in the perception of the unvoiced stop consonants". The American Journal of Psychology. 65 (4): 497–516. doi:10.2307/1418032. JSTOR 1418032. PMID 12996688.

9. Liberman, A. M.; Delattre, P. C.; Cooper, F. S.; Gerstman, L. J. (1954). "The role of consonant-vowel transitions in the perception of the stop and nasal consonants". Psychological Monographs: General and Applied. 68 (8): 1–13. doi:10.1037/h0093673.

10. Fowler, C. A.; Saltzman, E. (1993). "Coordination and coarticulation in speech production". Language and Speech. 36 (2–3): 171–195. doi:10.1177/002383099303600304. PMID 8277807.

11. Liberman, A. M.; Isenberg, D.; Rakerd, B. (1981). "Duplex perception of cues for stop consonants: Evidence for a phonetic mode". Perception & Psychophysics. 30 (2): 133–143. doi:10.3758/bf03204471. PMID 7301513.

12. Liberman, A. M. (1970). "The grammars of speech and language". Cognitive Psychology. 1 (4): 301–323. doi:10.1016/0010-0285(70)90018-6.

13. Liberman, A. M.; Mattingly, I. G. (1985). "The motor theory of speech perception revised". Cognition. 21 (1): 1–36. CiteSeerX 10.1.1.330.220. doi:10.1016/0010-0277(85)90021-6. PMID 4075760.

14. Fowler, C. A.; Rosenblum, L. D. (1990). "Duplex perception: A comparison of monosyllables and slamming doors". Journal of Experimental Psychology: Human Perception and Performance. 16 (4): 742–754. doi:10.1037/0096-1523.16.4.742. PMID 2148589.

15. Massaro, D. W.; Chen, T. H. (2008). "The motor theory of speech perception revisited". Psychonomic Bulletin & Review. 15 (2): 453–457, discussion 457–462. doi:10.3758/pbr.15.2.453. PMID 18488668.

16. MacLeod, A.; Summerfield, Q. (1987). "Quantifying the contribution of vision to speech perception in noise". British Journal of Audiology. 21 (2): 131–141. doi:10.3109/03005368709077786. PMID 3594015.

17. Fowler, C. A.; Dekle, D. J. (1991). "Listening with eye and hand: Cross-modal contributions to speech perception". Journal of Experimental Psychology: Human Perception and Performance. 17 (3): 816–828. doi:10.1037/0096-1523.17.3.816. PMID 1834793.

18. Nygaard, L. C.; Pisoni, D. B. (1995). "Speech Perception: New Directions in Research and Theory". In Miller, J. L.; Eimas, P. D. (eds.). Handbook of Perception and Cognition: Speech, Language, and Communication. San Diego: Academic Press. ISBN 978-0-12-497770-9.

19. Liberman, A. M.; Harris, K. S.; Hoffman, H. S.; Griffith, B. C. (1957). "The discrimination of speech sounds within and across phoneme boundaries". Journal of Experimental Psychology. 54 (5): 358–368. doi:10.1037/h0044417. PMID 13481283.

20. Marslen-Wilson, W. (1973). "Linguistic structure and speech shadowing at very short latencies". Nature. 244 (5417): 522–523. doi:10.1038/244522a0. PMID 4621131.

21. Porter Jr, R. J.; Lubker, J. F. (1980). "Rapid reproduction of vowel-vowel sequences: Evidence for a fast and direct acoustic-motoric linkage in speech". Journal of Speech and Hearing Research. 23 (3): 593–602. doi:10.1044/jshr.2303.593. PMID 7421161.

22. Fadiga, L.; Craighero, L.; Buccino, G.; Rizzolatti, G. (2002). "Speech listening specifically modulates the excitability of tongue muscles: A TMS study". The European Journal of Neuroscience. 15 (2): 399–402. CiteSeerX 10.1.1.169.4261. doi:10.1046/j.0953-816x.2001.01874.x. PMID 11849307.

23. Watkins, K. E.; Strafella, A. P.; Paus, T. (2003). "Seeing and hearing speech excites the motor system involved in speech production". Neuropsychologia. 41 (8): 989–994. doi:10.1016/s0028-3932(02)00316-0. PMID 12667534.

24. Wilson, S. M.; Saygin, A. E. P.; Sereno, M. I.; Iacoboni, M. (2004). "Listening to speech activates motor areas involved in speech production". Nature Neuroscience. 7 (7): 701–702. doi:10.1038/nn1263. PMID 15184903.

25. Skipper, J. I.; Van Wassenhove, V.; Nusbaum, H. C.; Small, S. L. (2006). "Hearing Lips and Seeing Voices: How Cortical Areas Supporting Speech Production Mediate Audiovisual Speech Perception". Cerebral Cortex. 17 (10): 2387–2399. doi:10.1093/cercor/bhl147. PMC 2896890. PMID 17218482.

26. Meister, I. G.; Wilson, S. M.; Deblieck, C.; Wu, A. D.; Iacoboni, M. (2007). "The Essential Role of Premotor Cortex in Speech Perception". Current Biology. 17 (19): 1692–1696. doi:10.1016/j.cub.2007.08.064. PMC 5536895. PMID 17900904.

27. Pulvermüller, F.; Huss, M.; Kherif, F.; Moscoso del Prado Martin, F.; Hauk, O.; Shtyrov, Y. (2006). "Motor cortex maps articulatory features of speech sounds". Proceedings of the National Academy of Sciences. 103 (20): 7865–7870. doi:10.1073/pnas.0509989103. PMC 1472536. PMID 16682637.

28. d'Ausilio, A.; Pulvermüller, F.; Salmas, P.; Bufalari, I.; Begliomini, C.; Fadiga, L. (2009). "The Motor Somatotopy of Speech Perception". Current Biology. 19 (5): 381–385. doi:10.1016/j.cub.2009.01.017. PMID 19217297.

29. Assaneo, M. Florencia; Poeppel, David (2018). "The coupling between auditory and motor cortices is rate-restricted: Evidence for an intrinsic speech-motor rhythm". Science Advances. 4 (2): eaao3842. doi:10.1126/sciadv.aao3842. PMC 5810610. PMID 29441362.

30. Rizzolatti, G.; Craighero, L. (2004). "The Mirror-Neuron System". Annual Review of Neuroscience. 27: 169–192. doi:10.1146/annurev.neuro.27.070203.144230. PMID 15217330.

31. Hommel, B.; Müsseler, J.; Aschersleben, G.; Prinz, W. (2001). "The Theory of Event Coding (TEC): A framework for perception and action planning". The Behavioral and Brain Sciences. 24 (5): 849–878, discussion 878–937. doi:10.1017/s0140525x01000103. PMID 12239891.

32. Massaro, D. W. (1997). Perceiving talking faces: From speech perception to a behavioral principle. Cambridge, MA: MIT Press. ISBN 978-0-262-13337-1.

33. Lane, H. (1965). "The Motor Theory of Speech Perception: A Critical Review". Psychological Review. 72 (4): 275–309. doi:10.1037/h0021986. PMID 14348425.

34. Tsao, F. M.; Liu, H. M.; Kuhl, P. K. (2004). "Speech perception in infancy predicts language development in the second year of life: A longitudinal study". Child Development. 75 (4): 1067–1084. doi:10.1111/j.1467-8624.2004.00726.x. PMID 15260865.

35. MacNeilage, P. F.; Rootes, T. P.; Chase, R. A. (1967). "Speech production and perception in a patient with severe impairment of somesthetic perception and motor control". Journal of Speech and Hearing Research. 10 (3): 449–467. PMID 6081929.

36. Hickok, G.; Poeppel, D. (2007). "The cortical organization of speech processing". Nature Reviews Neuroscience. 8 (5): 393–402. doi:10.1038/nrn2113. PMID 17431404. See p. 394.

37. Williams, H.; Nottebohm, F. (1985). "Auditory responses in avian vocal motor neurons: A motor theory for song perception in birds". Science. 229 (4710): 279–282. doi:10.1126/science.4012321. PMID 4012321.