Ensemble learning

In statistics and machine learning, ensemble methods use multiple learning algorithms to obtain better predictive performance than could be obtained from any of the constituent learning algorithms alone.[1][2][3] Unlike a statistical ensemble in statistical mechanics, which is usually infinite, a machine learning ensemble consists of only a concrete finite set of alternative models, but typically allows for much more flexible structure to exist among those alternatives.

Overview

Supervised learning algorithms are commonly described as searching through a hypothesis space to find a suitable hypothesis that will make good predictions for a particular problem. Even if the hypothesis space contains hypotheses that are very well suited to the problem, it may be very difficult to find a good one. Ensembles combine multiple hypotheses to form a (hopefully) better hypothesis. The term ensemble is usually reserved for methods that generate multiple hypotheses using the same base learner. The broader term multiple classifier systems also covers hybridization of hypotheses that are not induced by the same base learner.

Evaluating the prediction of an ensemble typically requires more computation than evaluating the prediction of a single model, so ensembles may be thought of as a way to compensate for poor learning algorithms by performing a lot of extra computation. Fast algorithms such as decision trees are commonly used in ensemble methods (for example, random forests), although slower algorithms can benefit from ensemble techniques as well.

By analogy, ensemble techniques have also been used in unsupervised learning scenarios, for example in consensus clustering or anomaly detection.

Ensemble theory

An ensemble is itself a supervised learning algorithm, because it can be trained and then used to make predictions. The trained ensemble therefore represents a single hypothesis. This hypothesis, however, is not necessarily contained within the hypothesis space of the models from which it is built, so an ensemble can represent functions that none of its members can. In theory, this flexibility allows an ensemble to over-fit the training data more than a single model would, but in practice some ensemble techniques (especially bagging) tend to reduce over-fitting.

Empirically, ensembles tend to yield better results when there is significant diversity among the models.[4][5] Many ensemble methods therefore seek to promote diversity among the models they combine.[6][7] Although perhaps non-intuitive, more random algorithms (like random decision trees) can be used to produce a stronger ensemble than very deliberate algorithms (like entropy-reducing decision trees).[8] Using a variety of strong learning algorithms, however, has been shown to be more effective than using techniques that deliberately weaken the models in order to promote diversity.[9]
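One way to see why diversity matters is an idealized calculation. The sketch below assumes a binary task, an odd number of models, and models whose errors are fully independent and equally likely; none of these assumptions comes from the cited studies, and the function name `majority_vote_accuracy` is hypothetical. Under those assumptions the accuracy of a simple majority vote follows from the binomial distribution.

```python
from math import comb

def majority_vote_accuracy(n_models: int, p: float) -> float:
    """Probability that a strict majority of n independent models is correct.

    Assumes a binary task, an odd n_models (so no ties), and that each model
    is correct with the same probability p, independently of the others.
    """
    k_min = n_models // 2 + 1  # smallest number of correct votes that wins
    return sum(
        comb(n_models, k) * p**k * (1 - p) ** (n_models - k)
        for k in range(k_min, n_models + 1)
    )

# Accuracy of the vote for a few ensemble sizes, each model being right 70% of the time.
for n in (1, 3, 5, 11, 21):
    print(n, round(majority_vote_accuracy(n, 0.7), 4))
```

With three such models the vote is correct with probability 0.784, compared with 0.7 for a single model. If the models were identical copies (no diversity at all), the vote would stay at 0.7 regardless of ensemble size. Real models are rarely independent, so this calculation is only an upper-level intuition, not a guide to how large an ensemble should be.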

Ensemble size

Although the number of component classifiers in an ensemble has a great impact on prediction accuracy, only a limited number of studies address this problem. Determining the ensemble size a priori matters even more for online ensemble classifiers, because of the volume and velocity of big data streams. Statistical tests have mostly been used to determine the proper number of components. More recently, a theoretical framework suggested that there is an ideal number of component classifiers for an ensemble, such that having more or fewer classifiers than this number would degrade accuracy. This is called "the law of diminishing returns in ensemble construction." The framework shows that using the same number of independent component classifiers as class labels gives the highest accuracy.[10][11]

Common types of ensembles

Bayes optimal classifier

The Bayes optimal classifier is a classification technique. It is an ensemble of all the hypotheses in the hypothesis space. On average, no other ensemble can outperform it.[12] The naive Bayes optimal classifier is a version of this that assumes that the data is conditionally independent given the class, which makes the computation more feasible. Each hypothesis is given a vote proportional to the likelihood that the training dataset would be sampled from a system if that hypothesis were true. To account for training data of finite size, the vote of each hypothesis is also multiplied by the prior probability of that hypothesis. The Bayes optimal classifier can be expressed with the following equation:

$$y = \underset{c_j \in C}{\operatorname{argmax}} \sum_{h_i \in H} P(c_j \mid h_i)\, P(T \mid h_i)\, P(h_i)$$

where $y$ is the predicted class, $C$ is the set of all possible classes, $H$ is the hypothesis space, $P$ refers to a probability, and $T$ is the training data. As an ensemble, the Bayes optimal classifier represents a hypothesis that is not necessarily in $H$. The hypothesis represented by the Bayes optimal classifier, however, is the optimal hypothesis in ensemble space (the space of all possible ensembles consisting only of hypotheses in $H$).

This formula can be restated using Bayes' theorem, which says that the posterior is proportional to the likelihood times the prior:

$$P(h_i \mid T) \propto P(T \mid h_i)\, P(h_i)$$

hence,

$$y = \underset{c_j \in C}{\operatorname{argmax}} \sum_{h_i \in H} P(c_j \mid h_i)\, P(h_i \mid T)$$
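The posterior-weighted vote that defines the Bayes optimal classifier can be illustrated with a tiny worked example. The three hypotheses, their posterior probabilities and the class names below are made-up numbers chosen for illustration, and `bayes_optimal` is a hypothetical helper, not part of any library.

```python
# Posterior P(h_i | T) for three hypothetical hypotheses (illustrative numbers).
posterior = {"h1": 0.4, "h2": 0.3, "h3": 0.3}

# P(c_j | h_i): in this toy case each hypothesis predicts one class with certainty.
class_given_h = {
    "h1": {"pos": 1.0, "neg": 0.0},
    "h2": {"pos": 0.0, "neg": 1.0},
    "h3": {"pos": 0.0, "neg": 1.0},
}

def bayes_optimal(classes, posterior, class_given_h):
    """Return the class maximizing sum_h P(c | h) * P(h | T), plus all scores."""
    scores = {
        c: sum(class_given_h[h][c] * posterior[h] for h in posterior)
        for c in classes
    }
    return max(scores, key=scores.get), scores

prediction, scores = bayes_optimal(["pos", "neg"], posterior, class_given_h)
map_hypothesis = max(posterior, key=posterior.get)  # single most probable hypothesis
print(prediction, scores, map_hypothesis)
```

Here the single most probable hypothesis, `h1`, predicts `pos`, but the combined vote assigns 0.6 to `neg` and 0.4 to `pos`, so the ensemble predicts `neg`. This is a case where the ensemble's prediction differs from that of the best individual hypothesis.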

Bootstrap aggregating (bagging)

Bootstrap aggregating, often abbreviated as bagging, involves having each model in the ensemble vote with equal weight. In order to promote model variance, bagging trains each model in the ensemble on a bootstrap sample, that is, a set of examples drawn at random with replacement from the training set. As an example, the random forest algorithm combines random decision trees with bagging to achieve very high classification accuracy.[13]
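A minimal sketch of bagging, assuming a one-dimensional binary task and a very simple base learner (a threshold rule, often called a decision stump). The base learner, the toy data and all function names are illustrative choices rather than a reference implementation; each model is trained on examples drawn with replacement, and prediction is an equally weighted vote.

```python
import random
from collections import Counter

def predict_stump(stump, x):
    threshold, label_if_ge = stump
    return label_if_ge if x >= threshold else 1 - label_if_ge

def fit_stump(xs, ys):
    """Fit a 1-D threshold rule (threshold, label_if_ge) with the fewest training errors."""
    best, best_errors = None, len(xs) + 1
    for threshold in sorted(set(xs)):
        for label_if_ge in (0, 1):
            stump = (threshold, label_if_ge)
            errors = sum(predict_stump(stump, x) != y for x, y in zip(xs, ys))
            if errors < best_errors:
                best, best_errors = stump, errors
    return best

def bootstrap_sample(xs, ys, rng):
    """Draw len(xs) examples uniformly at random, with replacement."""
    idx = [rng.randrange(len(xs)) for _ in xs]
    return [xs[i] for i in idx], [ys[i] for i in idx]

def fit_bagging(xs, ys, n_models=25, seed=0):
    """Train each model on its own bootstrap sample of the training set."""
    rng = random.Random(seed)
    return [fit_stump(*bootstrap_sample(xs, ys, rng)) for _ in range(n_models)]

def predict_bagging(models, x):
    """Every model casts one equally weighted vote; the most common label wins."""
    votes = Counter(predict_stump(stump, x) for stump in models)
    return votes.most_common(1)[0][0]

# Toy data (illustrative): label 0 for x in 0..9, label 1 for x in 10..19.
xs = list(range(20))
ys = [0] * 10 + [1] * 10
models = fit_bagging(xs, ys)
print(predict_bagging(models, 0), predict_bagging(models, 19))
```

Because each bootstrap sample omits some training points, the individual stumps place their thresholds slightly differently; the vote averages out that variation.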

Boosting

Boosting involves incrementally building an ensemble by training each new model instance to emphasize the training instances that previous models misclassified. In some cases, boosting has been shown to yield better accuracy than bagging, but it also tends to be more likely to over-fit the training data. By far the most common implementation of boosting is AdaBoost, although some newer algorithms are reported to achieve better results.
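The re-weighting idea can be sketched with a compact AdaBoost-style loop. This is a simplified illustration under stated assumptions: labels are +1 or -1, the base learner is a one-dimensional threshold stump, and the function names and toy data are hypothetical. It is not tuned or complete enough for real use.

```python
import math

def stump_predict(stump, x):
    threshold, sign = stump
    return sign if x >= threshold else -sign

def fit_weighted_stump(xs, ys, weights):
    """Return the threshold stump with the lowest weighted training error."""
    best, best_err = None, float("inf")
    for threshold in sorted(set(xs)):
        for sign in (1, -1):
            stump = (threshold, sign)
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if stump_predict(stump, x) != y)
            if err < best_err:
                best, best_err = stump, err
    return best, best_err

def fit_adaboost(xs, ys, n_rounds):
    n = len(xs)
    weights = [1.0 / n] * n          # start with uniform example weights
    ensemble = []                    # list of (alpha, stump) pairs
    for _ in range(n_rounds):
        stump, err = fit_weighted_stump(xs, ys, weights)
        err = min(max(err, 1e-10), 1 - 1e-10)   # avoid division by zero
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, stump))
        # Misclassified points (y * h(x) = -1) gain weight; the rest lose weight.
        weights = [w * math.exp(-alpha * y * stump_predict(stump, x))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict_adaboost(ensemble, x):
    score = sum(alpha * stump_predict(stump, x) for alpha, stump in ensemble)
    return 1 if score >= 0 else -1

# Toy data (illustrative): a +, -, + pattern that no single stump can represent.
xs = [0, 1, 2, 3, 4, 5, 6, 7, 8]
ys = [1, 1, 1, -1, -1, -1, 1, 1, 1]
ensemble = fit_adaboost(xs, ys, n_rounds=3)
predictions = [predict_adaboost(ensemble, x) for x in xs]
print(predictions == ys)
```

On this toy pattern a single stump misclassifies at least three of the nine points, while the weighted vote of three stumps reproduces every label. It is also a small instance of an ensemble representing a function outside the hypothesis space of its base learner.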

Bayesian model averaging

Bayesian model averaging (BMA) is an ensemble technique that seeks to approximate the Bayes optimal classifier by sampling hypotheses from the hypothesis space and combining them using Bayes' law.[14] Unlike the Bayes optimal classifier, BMA can be practically implemented. Hypotheses are typically sampled using a Monte Carlo sampling technique such as MCMC. For example, Gibbs sampling may be used to draw hypotheses that are representative of the distribution $P(T \mid H)$. It has been shown that under certain circumstances, when hypotheses are drawn in this manner and averaged according to Bayes' law, this technique has an expected error that is bounded to be at most twice the expected error of the Bayes optimal classifier.[15] Despite the theoretical correctness of this technique, early work showed experimental results suggesting that the method promoted over-fitting and performed worse than simpler ensemble techniques such as bagging;[16] however, these conclusions appear to be based on a misunderstanding of the purpose of Bayesian model averaging as opposed to model combination.[17] Additionally, there have been considerable advances in the theory and practice of BMA. Recent rigorous proofs demonstrate the accuracy of BMA in variable selection and estimation in high-dimensional settings,[18] and empirical evidence highlights the role of sparsity-enforcing priors within BMA in alleviating overfitting.[19]

Bayesian model combination

Bayesian model combination (BMC) is an algorithmic correction to Bayesian model averaging (BMA). Instead of sampling each model in the ensemble individually, it samples from the space of possible ensembles (with model weightings drawn randomly from a Dirichlet distribution having uniform parameters). This modification overcomes the tendency of BMA to converge toward giving all of the weight to a single model. Although BMC is somewhat more computationally expensive than BMA, it tends to yield dramatically better results. The results from BMC have been shown to be better on average (with statistical significance) than both BMA and bagging.[20]

The use of Bayes' law to compute model weights necessitates computing the probability of the data given each model. Typically, none of the models in the ensemble is exactly the distribution from which the training data were generated, so all of them correctly receive a value close to zero for this term. This would work well if the ensemble were big enough to sample the entire model space, but that is rarely possible. Consequently, each pattern in the training data causes the ensemble weight to shift toward the model in the ensemble that is closest to the distribution of the training data. BMA thus essentially reduces to an unnecessarily complex method for doing model selection.
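The drift of the weights toward a single model can be reproduced numerically. The sketch below assumes two hypothetical models with equal priors that assign a fixed likelihood of 0.60 and 0.55 to every training example; those numbers and the helper name `bma_weights` are illustrative only. The weights are computed with Bayes' law in log space for numerical stability.

```python
import math

def bma_weights(log_likelihoods, priors):
    """Posterior model weights: P(h | T) is proportional to P(T | h) * P(h)."""
    log_post = [ll + math.log(p) for ll, p in zip(log_likelihoods, priors)]
    shift = max(log_post)  # subtract the maximum before exponentiating
    unnormalized = [math.exp(lp - shift) for lp in log_post]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

# Hypothetical per-example likelihoods: model A fits each point slightly better than model B.
per_example = [0.60, 0.55]
for n in (1, 10, 100, 1000):
    log_likelihoods = [n * math.log(p) for p in per_example]
    weights = bma_weights(log_likelihoods, priors=[0.5, 0.5])
    print(n, [round(w, 4) for w in weights])
```

Even though the two models are almost equally good on any single example, the ratio of their weights grows as (0.60 / 0.55) raised to the number of examples, so with enough data practically all of the weight ends up on model A.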

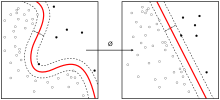

The possible weightings for an ensemble can be visualized as lying on a simplex. At each vertex of the simplex, all of the weight is given to a single model in the ensemble. BMA converges toward the vertex that is closest to the distribution of the training data. By contrast, BMC converges toward the point where this distribution projects onto the simplex. In other words, instead of selecting the one model that is closest to the generating distribution, it seeks the combination of models that is closest to the generating distribution.

The results from BMA can often be approximated by using cross-validation to select the best model from a bucket of models. Likewise, the results from BMC may be approximated by using cross-validation to select the best ensemble combination from a random sampling of possible weightings.
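The approximation of BMC by random sampling of weightings can be sketched as follows. This is a simplified illustration: a single held-out set stands in for full cross-validation, the task is assumed to be binary, and the model outputs, labels and function names are hypothetical. Candidate weightings are drawn from a Dirichlet distribution with all parameters equal to 1 (by normalizing gamma draws), and the weighting with the highest held-out log-likelihood is kept.

```python
import math
import random

def sample_dirichlet_uniform(k, rng):
    """Draw one weight vector from a Dirichlet distribution with all parameters 1."""
    draws = [rng.gammavariate(1.0, 1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]

def mixture_log_likelihood(weights, model_probs, labels):
    """Log-likelihood of binary labels under the weighted mixture of the models.

    model_probs[m][i] is model m's predicted probability that example i has label 1.
    """
    log_lik = 0.0
    for i, y in enumerate(labels):
        p1 = sum(w * probs[i] for w, probs in zip(weights, model_probs))
        log_lik += math.log(p1 if y == 1 else 1.0 - p1)
    return log_lik

def select_weighting(model_probs, labels, n_samples=200, seed=0):
    """Sample candidate weightings and keep the one that scores best on held-out data."""
    rng = random.Random(seed)
    candidates = [sample_dirichlet_uniform(len(model_probs), rng)
                  for _ in range(n_samples)]
    return max(candidates,
               key=lambda w: mixture_log_likelihood(w, model_probs, labels))

# Hypothetical held-out predictions of three models on four examples.
model_probs = [
    [0.9, 0.8, 0.4, 0.3],
    [0.6, 0.4, 0.2, 0.1],
    [0.7, 0.9, 0.6, 0.2],
]
labels = [1, 1, 0, 0]
best_weights = select_weighting(model_probs, labels)
print([round(w, 3) for w in best_weights])
```

Unlike picking a single best model, the selected weighting can lie anywhere inside the simplex, so it may spread weight over several models when their combination explains the held-out data better.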

Bucket of models

A "bucket of models" is an ensemble technique in which a model selection algorithm is used to choose the best model for each problem. When tested with only one problem, a bucket of models can produce no better results than the best model in the set, but when evaluated across many problems, it will typically produce much better results, on average, than any model in the set.

The most common approach used for model-selection is cross-validation selection (sometimes called a "bake-off contest"). It is described with the following pseudo-code:

    For each model m in the bucket:
        Do c times: (where 'c' is some constant)
            Randomly divide the training dataset into two datasets: A and B
            Train m with A
            Test m with B
    Select the model that obtains the highest average score

Cross-Validation Selection can be summed up as: "try them all with the training set, and pick the one that works best".[21]
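Cross-validation selection can be sketched in runnable form as below. The representation of a model as a `(fit, predict)` pair, the two toy models in the bucket and the data are assumptions made for illustration; the scoring is plain accuracy on the held-out half.

```python
import random

def cross_validation_select(bucket, xs, ys, c=5, seed=0):
    """Return the name of the model with the highest average held-out accuracy.

    bucket maps a name to a (fit, predict) pair: fit(xs, ys) returns a trained
    model and predict(model, x) returns a label.
    """
    rng = random.Random(seed)
    scores = {}
    for name, (fit, predict) in bucket.items():
        accuracies = []
        for _ in range(c):
            # Randomly divide the training data into two halves, A and B.
            idx = list(range(len(xs)))
            rng.shuffle(idx)
            half = len(idx) // 2
            a, b = idx[:half], idx[half:]
            model = fit([xs[i] for i in a], [ys[i] for i in a])  # train on A
            hits = sum(predict(model, xs[i]) == ys[i] for i in b)  # test on B
            accuracies.append(hits / len(b))
        scores[name] = sum(accuracies) / len(accuracies)
    return max(scores, key=scores.get), scores

# Two toy models (illustrative): always predict the majority label, or 1-nearest neighbour.
def fit_majority(xs, ys):
    return max(set(ys), key=ys.count)

def predict_majority(model, x):
    return model

def fit_1nn(xs, ys):
    return list(zip(xs, ys))

def predict_1nn(model, x):
    return min(model, key=lambda pair: abs(pair[0] - x))[1]

bucket = {
    "majority": (fit_majority, predict_majority),
    "1nn": (fit_1nn, predict_1nn),
}
# Toy data (illustrative): two well-separated clusters on the number line.
xs = list(range(10)) + list(range(100, 110))
ys = [0] * 10 + [1] * 10
best, scores = cross_validation_select(bucket, xs, ys)
print(best, scores)
```

Following the pseudo-code, each model gets its own random splits. A common variant reuses the same splits for every model so that the scores are directly comparable.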

Gating is a generalization of Cross-Validation Selection. It involves training another learning model to decide which of the models in the bucket is best-suited to solve the problem. Often, a perceptron is used for the gating model. It can be used to pick the "best" model, or it can be used to give a linear weight to the predictions from each model in the bucket.

When a bucket of models is used with a large set of problems, it may be desirable to avoid training some of the models that take a long time to train. Landmark learning is a meta-learning approach that seeks to solve this problem. It involves training only the fast (but imprecise) algorithms in the bucket, and then using the performance of these algorithms to help determine which slow (but accurate) algorithm is most likely to do best.[22]

Stacking

Stacking (sometimes called stacked generalization) involves training a learning algorithm to combine the predictions of several other learning algorithms. First, all of the other algorithms are trained using the available data, then a combiner algorithm is trained to make a final prediction using all the predictions of the other algorithms as additional inputs. If an arbitrary combiner algorithm is used, then stacking can theoretically represent any of the ensemble techniques described in this article, although, in practice, a logistic regression model is often used as the combiner.
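The two-stage structure of stacking can be sketched on a small regression task. Everything in this example is an illustrative assumption: the two deliberately weak base learners, the use of a least-squares linear combiner instead of logistic regression, the toy data, and the function names. The combiner is also fitted on in-sample base predictions for brevity, whereas held-out predictions are commonly used in practice to limit over-fitting.

```python
def fit_mean(xs, ys):
    """Base learner 1: always predict the mean of the training targets."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_slope_through_origin(xs, ys):
    """Base learner 2: least-squares line forced through the origin."""
    slope = sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)
    return lambda x: slope * x

def fit_linear_combiner(p1, p2, ys):
    """Least-squares weights (w1, w2) for y ~ w1 * p1 + w2 * p2, via the normal equations."""
    a11 = sum(u * u for u in p1)
    a12 = sum(u * v for u, v in zip(p1, p2))
    a22 = sum(v * v for v in p2)
    b1 = sum(u * y for u, y in zip(p1, ys))
    b2 = sum(v * y for v, y in zip(p2, ys))
    det = a11 * a22 - a12 * a12
    return (b1 * a22 - b2 * a12) / det, (a11 * b2 - a12 * b1) / det

def fit_stacking(xs, ys):
    # Stage 1: train the base learners on the available data.
    base = [fit_mean(xs, ys), fit_slope_through_origin(xs, ys)]
    # Stage 2: the base predictions become the inputs of the combiner.
    p1 = [base[0](x) for x in xs]
    p2 = [base[1](x) for x in xs]
    w1, w2 = fit_linear_combiner(p1, p2, ys)
    return lambda x: w1 * base[0](x) + w2 * base[1](x)

# Toy data (illustrative): y = 2x + 1, which neither base learner can fit alone.
xs = [0, 1, 2, 3, 4]
ys = [1, 3, 5, 7, 9]
stacked = fit_stacking(xs, ys)
print([round(stacked(x), 6) for x in xs])
```

Neither base learner can represent a line with a non-zero intercept, but a weighted sum of a constant prediction and a through-the-origin line can, so the combiner recovers the target on this toy data.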

Stacking typically yields performance better than any single one of the trained models.[23] It has been successfully used on both supervised learning tasks (regression,[24] classification and distance learning [25]) and unsupervised learning (density estimation).[26] It has also been used to estimate bagging's error rate.[3][27] It has been reported to out-perform Bayesian model-averaging.[28] The two top-performers in the Netflix competition utilized blending, which may be considered to be a form of stacking.[29]

Implementations in statistics packages

- R: at least three packages offer Bayesian model averaging tools,[30] including the BMS (an acronym for Bayesian Model Selection) package,[31] the BAS (an acronym for Bayesian Adaptive Sampling) package,[32] and the BMA package.[33] The H2O-package offers a lot of machine learning models including an ensembling model, which can also be trained using Spark.

- Python: scikit-learn, a machine learning package for Python, offers modules for ensemble learning, including bagging and averaging methods.

- MATLAB: classification ensembles are implemented in Statistics and Machine Learning Toolbox.[34]

Ensemble learning applications

In recent years, growing computational power has made it possible to train large ensembles in a reasonable time frame, and the number of applications of ensemble learning has grown accordingly.[35] Some of the applications of ensemble classifiers include:

Remote sensing

Land cover mapping

Land cover mapping is one of the major applications of Earth observation satellite sensors. It uses remote sensing and geospatial data to identify the materials and objects located on the surface of target areas. Generally, the classes of target materials include roads, buildings, rivers, lakes, and vegetation.[36] Several ensemble learning approaches, based on artificial neural networks,[37] kernel principal component analysis (KPCA),[38] decision trees with boosting,[39] random forest[36] and automatic design of multiple classifier systems,[40] have been proposed to efficiently identify land cover objects.

Change detection

Change detection is an image analysis problem that consists of identifying places where the land cover has changed over time. It is widely used in fields such as urban growth, forest and vegetation dynamics, land use and disaster monitoring.[41] The earliest applications of ensemble classifiers in change detection were designed with majority voting, Bayesian averaging and maximum posterior probability.[42]

Computer security

Distributed denial of service

Distributed denial of service is one of the most threatening cyber-attacks that may happen to an internet service provider.[35] By combining the output of single classifiers, ensemble classifiers reduce the total error of detecting and discriminating such attacks from legitimate flash crowds.[43]

Malware detection

The classification of malware such as computer viruses, computer worms, trojans, ransomware and spyware using machine learning techniques is inspired by the document categorization problem.[44] Ensemble learning systems have proven effective in this area.[45][46]

Intrusion detection

An intrusion detection system monitors a computer network or computer systems to identify intruder code, much like an anomaly detection process. Ensemble learning successfully helps such monitoring systems reduce their total error.[47][48]

Face recognition

Face recognition, which has recently become one of the most popular research areas in pattern recognition, deals with the identification or verification of a person from their digital images.[49]

Hierarchical ensembles based on the Gabor Fisher classifier and independent component analysis preprocessing techniques are some of the earliest ensembles employed in this field.[50][51][52]

Emotion recognition

Speech recognition is mainly based on deep learning, and most of the industry players in this field, such as Google, Microsoft and IBM, state that the core technology of their speech recognition is based on this approach. Speech-based emotion recognition, however, can also achieve satisfactory performance with ensemble learning.[53][54]

Ensemble learning is also being used successfully in facial emotion recognition.[55][56][57]

Fraud detection

Fraud detection deals with the identification of bank fraud, such as money laundering, credit card fraud and telecommunication fraud, all of which are broad areas of research and application for machine learning. Because ensemble learning improves the robustness of normal behavior modelling, it has been proposed as an efficient technique for detecting such fraudulent cases and activities in banking and credit card systems.[58][59]

Financial decision-making

Accurately predicting business failure is a crucial issue in financial decision-making. Different ensemble classifiers have therefore been proposed to predict financial crises and financial distress.[60] In the trade-based manipulation problem, where traders attempt to manipulate stock prices through buying and selling activities, ensemble classifiers are also required to analyze changes in stock market data and detect suspicious symptoms of stock price manipulation.[61]

Medicine

Ensemble classifiers have been successfully applied in neuroscience, proteomics and medical diagnosis, for example in the detection of neuro-cognitive disorders (such as Alzheimer's disease or myotonic dystrophy) based on MRI datasets.[62][63][64]

References

- ^ Opitz, D.; Maclin, R. (1999). "Popular ensemble methods: An empirical study". Journal of Artificial Intelligence Research. 11: 169–198. doi:10.1613/jair.614.

- ^ Polikar, R. (2006). "Ensemble based systems in decision making". IEEE Circuits and Systems Magazine. 6 (3): 21–45. doi:10.1109/MCAS.2006.1688199.

- ^ a b Rokach, L. (2010). "Ensemble-based classifiers". Artificial Intelligence Review. 33 (1–2): 1–39. doi:10.1007/s10462-009-9124-7.

- ^ Kuncheva, L. and Whitaker, C., Measures of diversity in classifier ensembles, Machine Learning, 51, pp. 181-207, 2003

- ^ Sollich, P. and Krogh, A., Learning with ensembles: How overfitting can be useful, Advances in Neural Information Processing Systems, volume 8, pp. 190-196, 1996.

- ^ Brown, G. and Wyatt, J. and Harris, R. and Yao, X., Diversity creation methods: a survey and categorisation., Information Fusion, 6(1), pp.5-20, 2005.

- ^ Accuracy and Diversity in Ensembles of Text Categorisers. J. J. García Adeva, Ulises Cerviño, and R. Calvo, CLEI Journal, Vol. 8, No. 2, pp. 1 - 12, December 2005.

- ^ Ho, T., Random Decision Forests, Proceedings of the Third International Conference on Document Analysis and Recognition, pp. 278-282, 1995.

- ^ Gashler, M. and Giraud-Carrier, C. and Martinez, T., Decision Tree Ensemble: Small Heterogeneous Is Better Than Large Homogeneous, The Seventh International Conference on Machine Learning and Applications, 2008, pp. 900-905., DOI 10.1109/ICMLA.2008.154

- ^ R. Bonab, Hamed; Can, Fazli (2016). A Theoretical Framework on the Ideal Number of Classifiers for Online Ensembles in Data Streams. CIKM. USA: ACM. p. 2053.

- ^ R. Bonab, Hamed; Can, Fazli (2017). Less Is More: A Comprehensive Framework for the Number of Components of Ensemble Classifiers (PDF). TNNLS. USA: IEEE.

- ^ Tom M. Mitchell, Machine Learning, 1997, p. 175

- ^ Breiman, L., Bagging Predictors, Machine Learning, 24(2), pp.123-140, 1996.

- ^ Hoeting, J. A.; Madigan, D.; Raftery, A. E.; Volinsky, C. T. (1999). "Bayesian Model Averaging: A Tutorial". Statistical Science. 14 (4): 382–401. doi:10.2307/2676803. JSTOR 2676803.

- ^ David Haussler, Michael Kearns, and Robert E. Schapire. Bounds on the sample complexity of Bayesian learning using information theory and the VC dimension. Machine Learning, 14:83–113, 1994

- ^ Domingos, Pedro (2000). Bayesian averaging of classifiers and the overfitting problem (PDF). Proceedings of the 17th International Conference on Machine Learning (ICML). pp. 223–230.

- ^ Minka, Thomas (2002), Bayesian model averaging is not model combination (PDF)

- ^ Castillo, I.; Schmidt-Hieber, J.; van der Vaart, A. (2015). "Bayesian linear regression with sparse priors". Annals of Statistics. 43 (5): 1986–2018. arXiv:1403.0735. doi:10.1214/15-AOS1334.

- ^ Hernández-Lobato, D.; Hernández-Lobato, J. M.; Dupont, P. (2013). "Generalized Spike-and-Slab Priors for Bayesian Group Feature Selection Using Expectation Propagation" (PDF). Journal of Machine Learning Research. 14: 1891–1945.

- ^ Monteith, Kristine; Carroll, James; Seppi, Kevin; Martinez, Tony. (2011). Turning Bayesian Model Averaging into Bayesian Model Combination (PDF). Proceedings of the International Joint Conference on Neural Networks IJCNN'11. pp. 2657–2663.

- ^ Saso Dzeroski, Bernard Zenko, Is Combining Classifiers Better than Selecting the Best One, Machine Learning, 2004, pp. 255–273

- ^ Bensusan, Hilan and Giraud-Carrier, Christophe G., Discovering Task Neighbourhoods Through Landmark Learning Performances, PKDD '00: Proceedings of the 4th European Conference on Principles of Data Mining and Knowledge Discovery, Springer-Verlag, 2000, pp. 325–330

- ^ Wolpert, D., Stacked Generalization., Neural Networks, 5(2), pp. 241-259., 1992

- ^ Breiman, L., Stacked Regression, Machine Learning, 24, 1996 doi:10.1007/BF00117832

- ^ Ozay, M.; Yarman Vural, F. T. (2013). "A New Fuzzy Stacked Generalization Technique and Analysis of its Performance". arXiv:1204.0171. Bibcode:2012arXiv1204.0171O.

- ^ Smyth, P. and Wolpert, D. H., Linearly Combining Density Estimators via Stacking, Machine Learning Journal, 36, 59-83, 1999

- ^ Wolpert, D.H., and Macready, W.G., An Efficient Method to Estimate Bagging’s Generalization Error, Machine Learning Journal, 35, 41-55, 1999

- ^ Clarke, B., Bayes model averaging and stacking when model approximation error cannot be ignored, Journal of Machine Learning Research, pp 683-712, 2003

- ^ Sill, J.; Takacs, G.; Mackey, L.; Lin, D. (2009). "Feature-Weighted Linear Stacking". arXiv:0911.0460. Bibcode:2009arXiv0911.0460S.

- ^ Amini, Shahram M.; Parmeter, Christopher F. (2011). "Bayesian model averaging in R" (PDF). Journal of Economic and Social Measurement. 36 (4): 253–287.

- ^ "BMS: Bayesian Model Averaging Library". The Comprehensive R Archive Network. Retrieved September 9, 2016.

- ^ "BAS: Bayesian Model Averaging using Bayesian Adaptive Sampling". The Comprehensive R Archive Network. Retrieved September 9, 2016.

- ^ "BMA: Bayesian Model Averaging". The Comprehensive R Archive Network. Retrieved September 9, 2016.

- ^ "Classification Ensembles". MATLAB & Simulink. Retrieved June 8, 2017.

- ^ a b Woźniak, Michał; Graña, Manuel; Corchado, Emilio (March 2014). "A survey of multiple classifier systems as hybrid systems". Information Fusion. 16: 3–17. doi:10.1016/j.inffus.2013.04.006.

- ^ a b Rodriguez-Galiano, V.F.; Ghimire, B.; Rogan, J.; Chica-Olmo, M.; Rigol-Sanchez, J.P. (January 2012). "An assessment of the effectiveness of a random forest classifier for land-cover classification". ISPRS Journal of Photogrammetry and Remote Sensing. 67: 93–104. Bibcode:2012JPRS...67...93R. doi:10.1016/j.isprsjprs.2011.11.002.

- ^ Giacinto, Giorgio; Roli, Fabio (August 2001). "Design of effective neural network ensembles for image classification purposes". Image and Vision Computing. 19 (9–10): 699–707. doi:10.1016/S0262-8856(01)00045-2.

- ^ Xia, Junshi; Yokoya, Naoto; Iwasaki, Yakira (March 2017). "A novel ensemble classifier of hyperspectral and LiDAR data using morphological features". 2017 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP): 6185–6189. doi:10.1109/ICASSP.2017.7953345.

- ^ Mochizuki, S.; Murakami, T. (November 2012). "Accuracy comparison of land cover mapping using the object-oriented image classification with machine learning algorithms". 33rd Asian Conference on Remote Sensing 2012, ACRS 2012. 1: 126–133.

- ^ Giacinto, G.; Roli, F.; Fumera, G. (September 2000). "Design of effective multiple classifier systems by clustering of classifiers". Proceedings 15th International Conference on Pattern Recognition. ICPR-2000: 160–163. doi:10.1109/ICPR.2000.906039.

- ^ Du, Peijun; Liu, Sicong; Xia, Junshi; Zhao, Yindi (January 2013). "Information fusion techniques for change detection from multi-temporal remote sensing images". Information Fusion. 14 (1): 19–27. doi:10.1016/j.inffus.2012.05.003.

- ^ Bruzzone, Lorenzo; Cossu, Roberto; Vernazza, Gianni (December 2002). "Combining parametric and non-parametric algorithms for a partially unsupervised classification of multitemporal remote-sensing images". Information Fusion. 3 (4): 289–297. doi:10.1016/S1566-2535(02)00091-X.

- ^ Raj Kumar, P. Arun; Selvakumar, S. (July 2011). "Distributed denial of service attack detection using an ensemble of neural classifier". Computer Communications. 34 (11): 1328–1341. doi:10.1016/j.comcom.2011.01.012.

- ^ Shabtai, Asaf; Moskovitch, Robert; Elovici, Yuval; Glezer, Chanan (February 2009). "Detection of malicious code by applying machine learning classifiers on static features: A state-of-the-art survey". Information Security Technical Report. 14 (1): 16–29. doi:10.1016/j.istr.2009.03.003.

- ^ Zhang, Boyun; Yin, Jianping; Hao, Jingbo; Zhang, Dingxing; Wang, Shulin (2007). "Malicious Codes Detection Based on Ensemble Learning". Autonomic and Trusted Computing: 468–477. doi:10.1007/978-3-540-73547-2_48.

- ^ Menahem, Eitan; Shabtai, Asaf; Rokach, Lior; Elovici, Yuval (February 2009). "Improving malware detection by applying multi-inducer ensemble". Computational Statistics & Data Analysis. 53 (4): 1483–1494. doi:10.1016/j.csda.2008.10.015.

- ^ Locasto, Michael E.; Wang, Ke; Keromytis, Angeles D.; Salvatore, J. Stolfo (2005). "FLIPS: Hybrid Adaptive Intrusion Prevention". Recent Advances in Intrusion Detection: 82–101. doi:10.1007/11663812_5.

- ^ Giacinto, Giorgio; Perdisci, Roberto; Del Rio, Mauro; Roli, Fabio (January 2008). "Intrusion detection in computer networks by a modular ensemble of one-class classifiers". Information Fusion. 9 (1): 69–82. doi:10.1016/j.inffus.2006.10.002.

- ^ Mu, Xiaoyan; Lu, Jiangfeng; Watta, Paul; Hassoun, Mohamad H. (July 2009). "Weighted voting-based ensemble classifiers with application to human face recognition and voice recognition". 2009 International Joint Conference on Neural Networks. doi:10.1109/IJCNN.2009.5178708.

- ^ Yu, Su; Shan, Shiguang; Chen, Xilin; Gao, Wen (April 2006). "Hierarchical ensemble of Gabor Fisher classifier for face recognition". Automatic Face and Gesture Recognition, 2006. FGR 2006. 7th International Conference on Automatic Face and Gesture Recognition (FGR06): 91–96. doi:10.1109/FGR.2006.64.

- ^ Su, Y.; Shan, S.; Chen, X.; Gao, W. (September 2006). "Patch-based gabor fisher classifier for face recognition". Proceedings - International Conference on Pattern Recognition. 2: 528–531. doi:10.1109/ICPR.2006.917.

- ^ Liu, Yang; Lin, Yongzheng; Chen, Yuehui (July 2008). "Ensemble Classification Based on ICA for Face Recognition". Proceedings - 1st International Congress on Image and Signal Processing, IEEE Conference, CISP 2008: 144–148. doi:10.1109/CISP.2008.581.

- ^ Rieger, Steven A.; Muraleedharan, Rajani; Ramachandran, Ravi P. (2014). "Speech based emotion recognition using spectral feature extraction and an ensemble of kNN classifiers". Proceedings of the 9th International Symposium on Chinese Spoken Language Processing, ISCSLP 2014: 589–593. doi:10.1109/ISCSLP.2014.6936711.

- ^ Krajewski, Jarek; Batliner, Anton; Kessel, Silke (October 2010). "Comparing Multiple Classifiers for Speech-Based Detection of Self-Confidence - A Pilot Study". 2010 20th International Conference on Pattern Recognition: 3716–3719. doi:10.1109/ICPR.2010.905.

- ^ Rani, P. Ithaya; Muneeswaran, K. (25 May 2016). "Recognize the facial emotion in video sequences using eye and mouth temporal Gabor features". Multimedia Tools and Applications. 76 (7): 10017–10040. doi:10.1007/s11042-016-3592-y.

- ^ Rani, P. Ithaya; Muneeswaran, K. (August 2016). "Facial Emotion Recognition Based on Eye and Mouth Regions". International Journal of Pattern Recognition and Artificial Intelligence. 30 (07): 1655020. doi:10.1142/S021800141655020X.

- ^ Rani, P. Ithaya; Muneeswaran, K (28 March 2018). "Emotion recognition based on facial components". Sādhanā. 43 (3). doi:10.1007/s12046-018-0801-6.

- ^ Louzada, Francisco; Ara, Anderson (October 2012). "Bagging k-dependence probabilistic networks: An alternative powerful fraud detection tool". Expert Systems with Applications. 39 (14): 11583–11592. doi:10.1016/j.eswa.2012.04.024.

- ^ Sundarkumar, G. Ganesh; Ravi, Vadlamani (January 2015). "A novel hybrid undersampling method for mining unbalanced datasets in banking and insurance". Engineering Applications of Artificial Intelligence. 37: 368–377. doi:10.1016/j.engappai.2014.09.019.

- ^ Kim, Yoonseong; Sohn, So Young (August 2012). "Stock fraud detection using peer group analysis". Expert Systems with Applications. 39 (10): 8986–8992. doi:10.1016/j.eswa.2012.02.025.

- ^ Kim, Yoonseong; Sohn, So Young (August 2012). "Stock fraud detection using peer group analysis". Expert Systems with Applications. 39 (10): 8986–8992. doi:10.1016/j.eswa.2012.02.025.

- ^ Savio, A.; García-Sebastián, M.T.; Chyzyk, D.; Hernandez, C.; Graña, M.; Sistiaga, A.; López de Munain, A.; Villanúa, J. (August 2011). "Neurocognitive disorder detection based on feature vectors extracted from VBM analysis of structural MRI". Computers in Biology and Medicine. 41 (8): 600–610. doi:10.1016/j.compbiomed.2011.05.010.

- ^ Ayerdi, B.; Savio, A.; Graña, M. (June 2013). "Meta-ensembles of classifiers for Alzheimer's disease detection using independent ROI features". Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) (Part 2): 122–130. doi:10.1007/978-3-642-38622-0_13.

- ^ Gu, Quan; Ding, Yong-Sheng; Zhang, Tong-Liang (April 2015). "An ensemble classifier based prediction of G-protein-coupled receptor classes in low homology". Neurocomputing. 154: 110–118. doi:10.1016/j.neucom.2014.12.013.

Further reading

- Zhou Zhihua (2012). Ensemble Methods: Foundations and Algorithms. Chapman and Hall/CRC. ISBN 978-1-439-83003-1.

- Robert Schapire; Yoav Freund (2012). Boosting: Foundations and Algorithms. MIT. ISBN 978-0-262-01718-3.

External links

- Robi Polikar (ed.). "Ensemble learning". Scholarpedia.

- The Waffles (machine learning) toolkit contains implementations of Bagging, Boosting, Bayesian Model Averaging, Bayesian Model Combination, Bucket-of-models, and other ensemble techniques