Sentence embedding

| Machine learning and data mining |

|---|

|

|

Machine-learning venues |

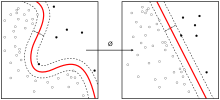

Sentence embedding is the collective name for a set of techniques in natural language processing (NLP) in which sentences are mapped to vectors of real numbers.[1][2][3][4][5][6][7]
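As a rough illustration of what "mapping a sentence to a vector" means, the sketch below averages per-word vectors into one fixed-length sentence vector and compares sentences by cosine similarity. This is only a simple baseline chosen for clarity, not the method of any particular cited paper. The word vectors, the names `TOY_WORD_VECTORS`, `embed_sentence` and `cosine_similarity`, and the example sentences are all hypothetical and invented for the example.

```python
import math

# Hypothetical 3-dimensional word vectors, invented for illustration only.
# Real systems learn vectors with hundreds of dimensions from large corpora.
TOY_WORD_VECTORS = {
    "the": [0.1, 0.0, 0.1],
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "sleeps": [0.1, 0.9, 0.0],
    "naps": [0.2, 0.8, 0.1],
    "stocks": [0.0, 0.1, 0.9],
    "fell": [0.1, 0.0, 0.8],
}


def embed_sentence(sentence, word_vectors=TOY_WORD_VECTORS):
    """Map a sentence to a fixed-length vector by averaging its word vectors."""
    # Naive whitespace tokenization; unknown words are skipped.
    tokens = [t for t in sentence.lower().split() if t in word_vectors]
    if not tokens:
        raise ValueError("sentence contains no known words")
    dim = len(next(iter(word_vectors.values())))
    total = [0.0] * dim
    for token in tokens:
        for i, value in enumerate(word_vectors[token]):
            total[i] += value
    return [x / len(tokens) for x in total]


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


a = embed_sentence("the cat sleeps")
b = embed_sentence("the dog naps")
c = embed_sentence("stocks fell")

print(cosine_similarity(a, b))  # similar sentences: closer to 1
print(cosine_similarity(a, c))  # unrelated sentences: closer to 0
```

Every sentence, whatever its length, ends up as a vector of the same dimension, which is what lets downstream models compare or classify sentences with ordinary vector operations.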

Application

Sentence embedding models are used with the machine learning software libraries PyTorch and TensorFlow; for example, the Universal Sentence Encoder is distributed through TensorFlow Hub.[8]

Evaluation

One way to evaluate sentence embeddings is to apply them to the Sentences Involving Compositional Knowledge (SICK) corpus[9] on both the entailment task (SICK-E) and the relatedness task (SICK-R).

In [10] the best results are obtained using a BiLSTM network trained on the Stanford Natural Language Inference (SNLI) corpus: a Pearson correlation coefficient of 0.885 on SICK-R and an accuracy of 86.3% on SICK-E. A slight improvement over these scores is reported in [11], with 0.888 on SICK-R and 87.8% on SICK-E, using a concatenation of bidirectional gated recurrent units.
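The two kinds of score quoted above can be computed as in the following minimal sketch. It assumes that SICK-R is scored as the Pearson correlation between predicted and human relatedness ratings and that SICK-E is scored as classification accuracy in percent. The function names `pearson` and `accuracy` and all data values are hypothetical toy inputs, not figures from the SICK corpus or from the cited papers.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def accuracy(predicted, gold):
    """Percentage of predictions that match the gold labels."""
    if len(predicted) != len(gold) or not gold:
        raise ValueError("need two non-empty sequences of equal length")
    correct = sum(p == g for p, g in zip(predicted, gold))
    return 100.0 * correct / len(gold)


# Hypothetical relatedness ratings on a 1-5 scale (SICK-R style).
gold_relatedness = [4.5, 1.2, 3.3, 2.0, 4.9]
predicted_relatedness = [4.1, 1.5, 3.0, 2.6, 4.7]

# Hypothetical entailment labels (SICK-E style).
gold_labels = ["ENTAILMENT", "NEUTRAL", "CONTRADICTION", "NEUTRAL"]
predicted_labels = ["ENTAILMENT", "NEUTRAL", "NEUTRAL", "NEUTRAL"]

sick_r = pearson(predicted_relatedness, gold_relatedness)
sick_e = accuracy(predicted_labels, gold_labels)

print(f"SICK-R (Pearson r): {sick_r:.3f}")
print(f"SICK-E (accuracy %): {sick_e:.1f}")
```

In a real evaluation the predictions would come from a model fed with the sentence embeddings of each sentence pair; here they are hard-coded to keep the scoring step in focus.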

External links

InferSent sentence embeddings and training code

Learning General Purpose Distributed Sentence Representations via Large Scale Multi-task Learning

References

1. Paper Summary: Evaluation of sentence embeddings in downstream and linguistic probing tasks

2. The Current Best of Universal Word Embeddings and Sentence Embeddings

3. Daniel Cer, Yinfei Yang, Sheng-yi Kong, Nan Hua, Nicole Limtiaco, Rhomni St. John, Noah Constant, Mario Guajardo-Cespedes, Steve Yuan, Chris Tar, Yun-Hsuan Sung, Brian Strope: "Universal Sentence Encoder", 2018; arXiv:1803.11175.

4. Ledell Wu, Adam Fisch, Sumit Chopra, Keith Adams, Antoine Bordes: "StarSpace: Embed All The Things!", 2017; arXiv:1709.03856.

5. Sanjeev Arora, Yingyu Liang, Tengyu Ma: "A simple but tough-to-beat baseline for sentence embeddings", 2016; openreview:SyK00v5xx.

6. Mircea Trifan, Bogdan Ionescu, Cristian Gadea, Dan Ionescu: "A graph digital signal processing method for semantic analysis". In Applied Computational Intelligence and Informatics (SACI), 2015 IEEE 10th Jubilee International Symposium on, pp. 187-192. IEEE, 2015; ieee:7208196.

7. Pierpaolo Basile, Annalina Caputo, Giovanni Semeraro: "A study on compositional semantics of words in distributional spaces". In Semantic Computing (ICSC), 2012 IEEE Sixth International Conference on, pp. 154-161. IEEE, 2012; ieee:6337099.

8. Google: "universal-sentence-encoder". TensorFlow Hub. Retrieved 6 October 2018.

9. Marco Marelli, Stefano Menini, Marco Baroni, Luisa Bentivogli, Raffaella Bernardi, Roberto Zamparelli: "A SICK cure for the evaluation of compositional distributional semantic models". In LREC, pp. 216-223. 2014.

10. Alexis Conneau, Douwe Kiela, Holger Schwenk, Loic Barrault: "Supervised Learning of Universal Sentence Representations from Natural Language Inference Data", 2017; arXiv:1705.02364.

11. Sandeep Subramanian, Adam Trischler, Yoshua Bengio: "Learning General Purpose Distributed Sentence Representations via Large Scale Multi-task Learning", 2018; arXiv:1804.00079.